Key Features

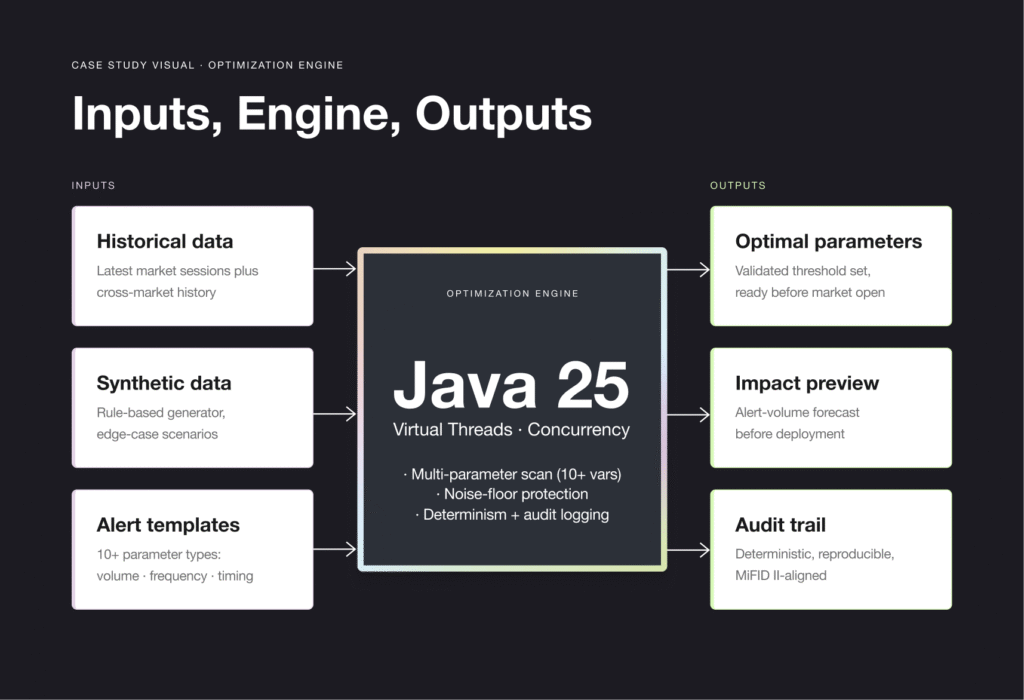

- Predictive Impact Transparency: A clear, data-backed preview of exactly how many alerts will be eliminated by specific parameter sets – so users understand operational impact before deployment.

- Universal Data Flexibility: Runs optimizations across diverse data samples – from the latest market sessions to historical cross-market data – supporting any alert type or trading anomaly pattern.

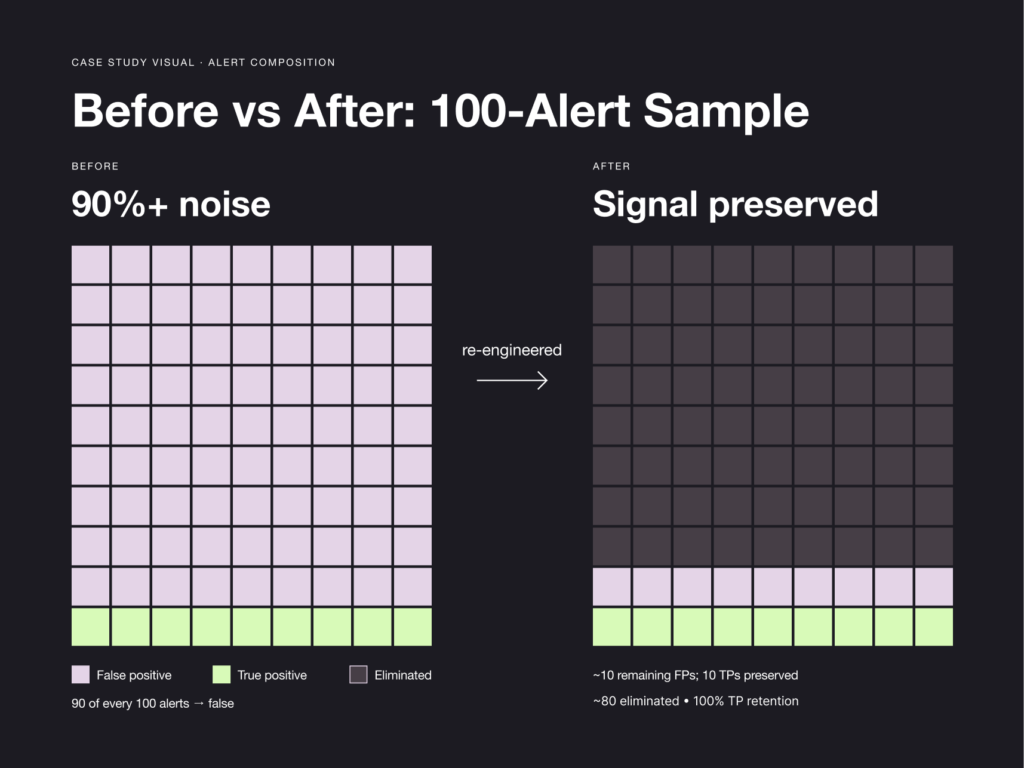

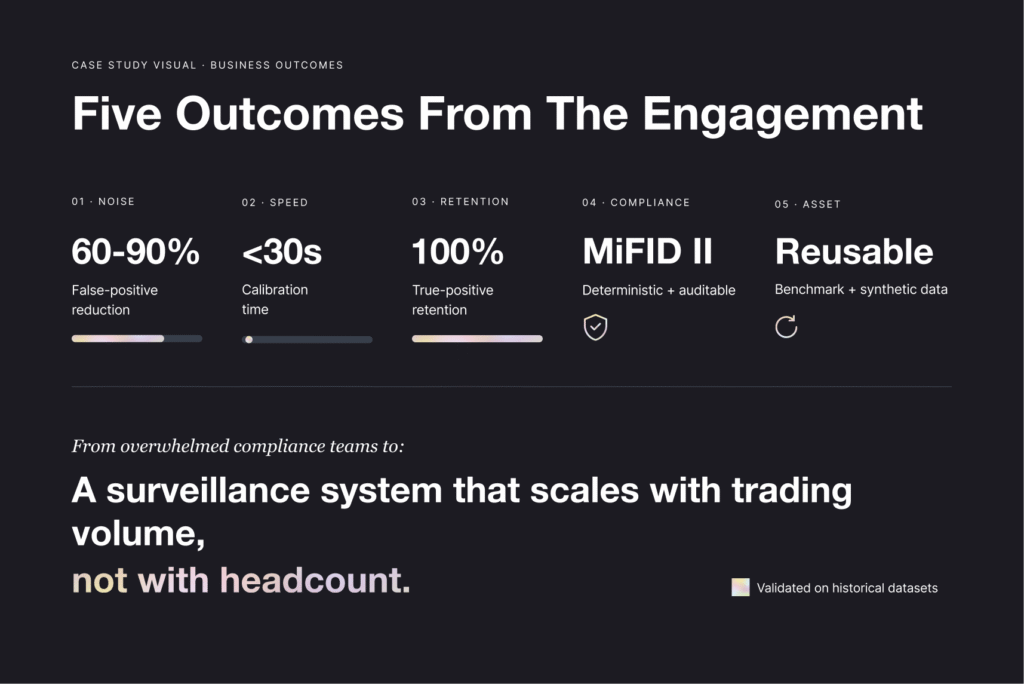

- Noise Floor Protection: A built-in boundary layer prevents aggressive noise reduction from overlapping with true-positive signatures, so genuine market abuse alerts are never suppressed as noise.

- Multi-Parameter Scalability: Simultaneously optimizes 10+ complex variables – a capability made possible by the high-concurrency architecture of the Java backend.

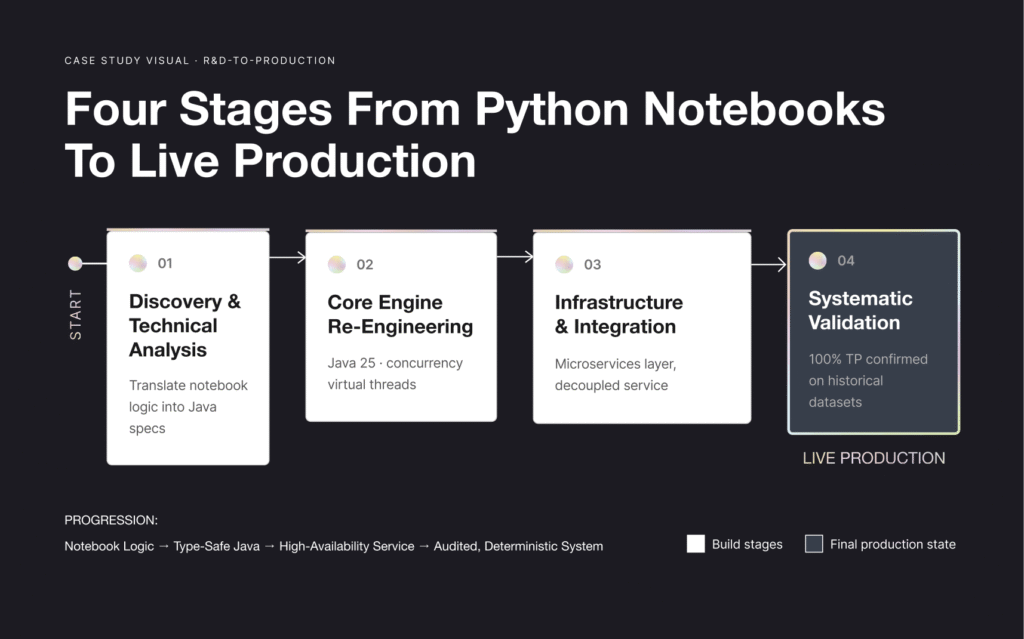
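The impact preview and noise floor protection listed above can be illustrated with a minimal sketch. It assumes, purely for illustration, that each historical alert carries a numeric score and an analyst-confirmed label, and that a parameter set reduces to a single suppression threshold. The names `Alert`, `guardThreshold`, and `previewEliminated` are hypothetical and are not taken from the actual engine.

```java
import java.util.List;

public class NoiseFloorSketch {
    /** Hypothetical alert: a numeric score plus whether analysts confirmed it as a true positive. */
    record Alert(double score, boolean confirmedTruePositive) {}

    /** Highest threshold the guard allows: the lowest confirmed score minus a safety margin. */
    static double noiseFloorCeiling(List<Alert> history, double margin) {
        return history.stream()
                .filter(Alert::confirmedTruePositive)
                .mapToDouble(Alert::score)
                .min()
                .orElse(Double.POSITIVE_INFINITY) - margin;
    }

    /** Clamps a candidate threshold so it cannot reach into the true-positive region. */
    static double guardThreshold(double candidate, List<Alert> history, double margin) {
        return Math.min(candidate, noiseFloorCeiling(history, margin));
    }

    /** Impact preview: how many historical alerts would be suppressed at this threshold. */
    static long previewEliminated(List<Alert> history, double threshold) {
        return history.stream().filter(a -> a.score() < threshold).count();
    }

    public static void main(String[] args) {
        List<Alert> history = List.of(
                new Alert(0.10, false), new Alert(0.20, false), new Alert(0.35, false),
                new Alert(0.50, true), new Alert(0.90, true));
        double safe = guardThreshold(0.60, history, 0.05);
        System.out.println("Guarded threshold: " + safe);
        System.out.println("Alerts eliminated: " + previewEliminated(history, safe));
    }
}
```

In this sketch the guard is applied before the preview, so the number shown to the user already reflects the protected threshold and not the raw candidate.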

Key Engineering Decisions

1. High-Concurrency & Real-Time Performance

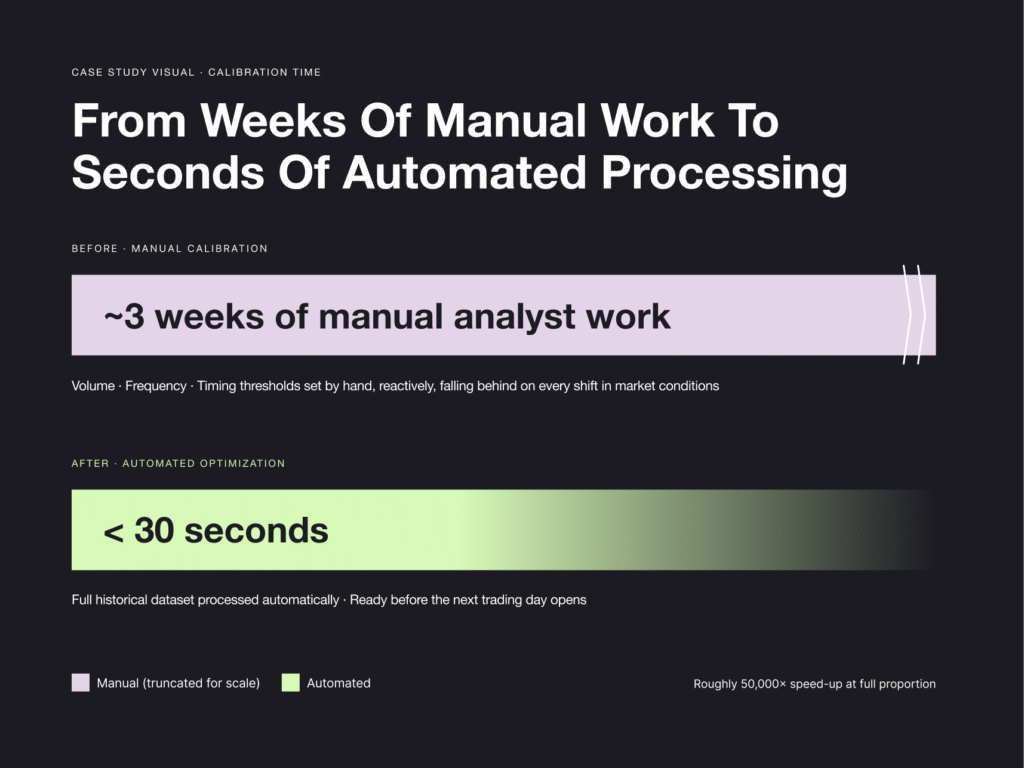

To achieve execution times under 30 seconds across massive datasets, we implemented multi-level parallelization using Java 25’s advanced concurrency models and virtual threads. This native approach eliminated cross-language overhead and enabled the engine to handle high-scale trading volumes in near real time.
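As a rough illustration of multi-level parallelization with virtual threads, the sketch below fans out one task per (candidate parameter, data partition) pair. It is a simplified assumption of how such an evaluation could be structured, not the engine's actual design: the scoring function `alertsRemaining`, the single-threshold parameter, and the partition layout are all hypothetical. It requires Java 21 or later.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

public class ParallelEvaluationSketch {
    /** Hypothetical scoring step: counts alerts in one partition that a threshold would still raise. */
    static long alertsRemaining(double[] partition, double threshold) {
        long remaining = 0;
        for (double score : partition) {
            if (score >= threshold) remaining++;
        }
        return remaining;
    }

    /** Runs every (candidate, partition) pair on its own virtual thread and sums results per candidate. */
    static long[] evaluate(double[] candidates, List<double[]> partitions) {
        List<List<Future<Long>>> futures = new ArrayList<>();
        try (ExecutorService pool = Executors.newVirtualThreadPerTaskExecutor()) {
            for (double candidate : candidates) {          // level 1: parameter candidates
                List<Future<Long>> perCandidate = new ArrayList<>();
                for (double[] partition : partitions) {    // level 2: data partitions
                    perCandidate.add(pool.submit(() -> alertsRemaining(partition, candidate)));
                }
                futures.add(perCandidate);
            }
        } // close() waits for all submitted tasks to finish

        long[] totals = new long[candidates.length];
        for (int i = 0; i < candidates.length; i++) {
            for (Future<Long> f : futures.get(i)) {
                totals[i] += f.resultNow(); // safe: every task has completed by now
            }
        }
        return totals;
    }

    public static void main(String[] args) {
        List<double[]> partitions = List.of(new double[] {0.1, 0.6, 0.9}, new double[] {0.4, 0.7});
        long[] totals = evaluate(new double[] {0.5, 2.0}, partitions);
        for (long t : totals) System.out.println(t);
    }
}
```

Virtual threads make it cheap to create one task per pair instead of tuning a fixed pool size. For CPU-bound scoring the speedup is still limited by the number of cores.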

2. Custom Algorithmic Refinement

The initial research provided only a basic algorithmic blueprint. We engineered a more sophisticated optimization engine from scratch, addressing complex edge cases overlooked in the experimental phase and extending standard optimization logic with custom heuristics to keep the engine stable under extreme market volatility.

3. AI-Accelerated Prototyping

We explored multiple algorithmic approaches, including gradient-based and evolutionary methods, using AI agents to prototype these alternatives and run high-speed comparative testing. This allowed us to select the most dependable approach without extending the development cycle.

4. Synthetic Data Engineering

Faced with restricted access to live production data, we developed a Synthetic Data Generator using rule-based logic to create diverse experimental environments – simulating different trading volumes, market complexities, and rare edge-case scenarios. This provided a measurable baseline for performance tracking and accelerated the validation cycle.
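A rule-based generator of this kind might look like the following minimal sketch. The `Trade` fields, the three rules, and the parameters (`volatility`, `anomalyEvery`) are illustrative assumptions and not the project's actual schema or rule set. A fixed seed makes every generated dataset reproducible, which is what allows it to serve as a baseline.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

public class SyntheticTradeSketch {
    /** Hypothetical trade record used only for this illustration. */
    record Trade(long timestampMillis, double price, int quantity, boolean injectedAnomaly) {}

    /**
     * Generates `count` trades from simple rules. The same seed always yields the same list.
     * volatility scales price moves; anomalyEvery > 0 injects an oversized trade at that interval.
     */
    static List<Trade> generate(long seed, int count, double volatility, int anomalyEvery) {
        Random rng = new Random(seed);
        List<Trade> trades = new ArrayList<>(count);
        double price = 100.0;
        for (int i = 0; i < count; i++) {
            // Rule 1: price follows a random walk scaled by volatility, floored above zero.
            price = Math.max(0.01, price + rng.nextGaussian() * volatility);
            // Rule 2: baseline quantity is uniform between 1 and 100.
            int quantity = 1 + rng.nextInt(100);
            // Rule 3: every anomalyEvery-th trade is inflated to simulate a rare edge case.
            boolean anomaly = anomalyEvery > 0 && (i + 1) % anomalyEvery == 0;
            if (anomaly) quantity *= 50;
            trades.add(new Trade(i * 1000L, price, quantity, anomaly));
        }
        return trades;
    }

    public static void main(String[] args) {
        List<Trade> calm = generate(42L, 1_000, 0.2, 0);      // low volatility, no anomalies
        List<Trade> stressed = generate(42L, 1_000, 5.0, 25); // high volatility, frequent anomalies
        System.out.println(calm.size() + " calm trades, " + stressed.size() + " stressed trades");
    }
}
```

Varying `count`, `volatility`, and `anomalyEvery` gives different trading volumes, market conditions, and edge-case densities from the same code path.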

5. Regulatory-Grade Determinism

In a stock exchange environment, trade surveillance technology must meet mandatory compliance standards. We built a dedicated test suite focused on Robustness, Determinism, and Input Sensitivity, integrated directly into the CI/CD pipeline – so every update maintains strict reproducibility and aligns with MiFID II and global financial regulatory standards.
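The determinism and input-sensitivity checks described above could be expressed along the following lines. This is a sketch under stated assumptions: `optimize` is a trivial stand-in (it returns the median score), not the real engine, and the helpers `isDeterministic` and `isStable` are hypothetical names. In a real pipeline these checks would typically be wrapped in test cases of whatever framework the CI uses.

```java
import java.util.Arrays;
import java.util.function.Function;

public class DeterminismCheckSketch {
    /** Stand-in optimizer for illustration only: returns the median of the input scores. */
    static double optimize(double[] scores) {
        double[] sorted = scores.clone();
        Arrays.sort(sorted);
        return sorted[sorted.length / 2];
    }

    /** Determinism: repeated runs on identical input must give bit-identical output. */
    static boolean isDeterministic(Function<double[], Double> optimizer, double[] input, int runs) {
        double first = optimizer.apply(input.clone());
        for (int i = 1; i < runs; i++) {
            if (Double.compare(first, optimizer.apply(input.clone())) != 0) return false;
        }
        return true;
    }

    /** Input sensitivity: shifting every input by epsilon must move the output by at most tolerance. */
    static boolean isStable(Function<double[], Double> optimizer, double[] input,
                            double epsilon, double tolerance) {
        double[] perturbed = input.clone();
        for (int i = 0; i < perturbed.length; i++) perturbed[i] += epsilon;
        double delta = Math.abs(optimizer.apply(perturbed) - optimizer.apply(input.clone()));
        return delta <= tolerance;
    }

    public static void main(String[] args) {
        double[] scores = {0.3, 0.1, 0.9, 0.5, 0.7};
        System.out.println("deterministic: " + isDeterministic(DeterminismCheckSketch::optimize, scores, 10));
        System.out.println("stable: " + isStable(DeterminismCheckSketch::optimize, scores, 1e-6, 1e-5));
    }
}
```

Comparing with `Double.compare` instead of a tolerance is deliberate for the determinism check: reproducibility here means identical results, not merely close ones.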

6. Automated Benchmarking Platform

We built a proprietary benchmarking environment running thousands of automated scenarios, with real-time monitoring of CPU, RAM, and execution time. The platform validates changes instantly and has become a reusable asset – a sandbox for future algorithmic work across trade surveillance software engagements.