Key takeaways

LLMOps tools in 2026 help teams deploy, manage, and improve large language models by automating, monitoring, and optimizing them. Choosing the right LLMOps tools helps keep AI operations reliable, secure, and scalable, and Teamvoy supports organizations through expert guidance and hands-on solutions.

Key points:

- LLMOps platforms provide critical support for deploying, monitoring, and optimizing large language models, addressing complexity, observability, scalability, and security.

- Selecting the right tool depends on your main need, such as deployment speed, observability, or optimization, and should also account for integration, scalability, and support.

- Open, modular platforms increase flexibility, prevent vendor lock-in, and simplify adapting as needs change.

- Strong observability and feedback loops help teams catch and fix issues early, keeping models reliable and safe.

- Teamvoy offers tailored LLMOps services, guiding from platform selection to optimization for better business outcomes.

What is LLMOps and why does it matter in 2026?

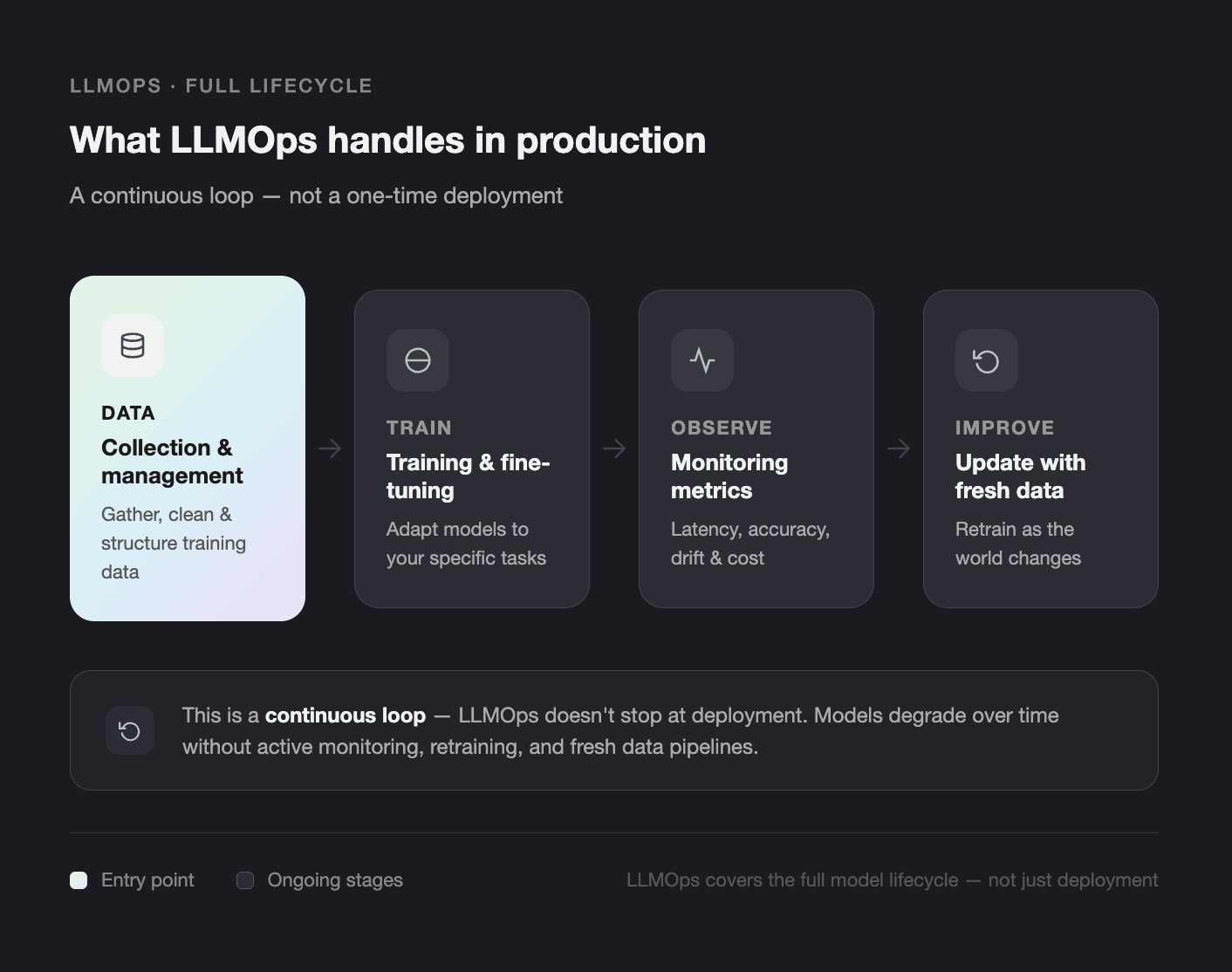

LLMOps (Large Language Model Operations) is the practice of deploying, managing, and optimizing large language models in production environments. Think of it as a toolkit that gives you the tools and resources you need to develop and run LLMs. It usually covers data collection and management, model training and fine-tuning, monitoring model metrics, and updating models with fresh data.

LLMOps tools in 2026 help teams deploy, monitor, and improve large language models (LLMs) at scale. The best LLMOps software combines easy deployment, strong monitoring, and powerful tools for keeping LLMs reliable and safe. In my work with clients at Teamvoy, I’ve seen firsthand how choosing the right LLMOps platform makes all the difference in business outcomes.

In this article, I’ll share what makes an LLMOps platform effective, how Teamvoy supports clients with LLMOps, and which LLMOps tools stand out in 2026 for different business needs.

Best LLMOps Platforms in 2026

| Tool | Best for | Key feature | Deployment type | Ease of use | Pricing |
| --- | --- | --- | --- | --- | --- |
| True Foundry | Enterprises building agentic AI systems | Cloud-agnostic control + full observability | Self-hosted / Cloud | Medium | From $499 per month |
| Amazon SageMaker | End-to-end ML lifecycle on AWS | Fully managed infrastructure + governance | Cloud (AWS) | Medium | Pay as you go |
| LangSmith | Building and monitoring AI agents | Observability + fast agent development | Cloud | Easy | From $39 per seat per month |
| Databricks | Data-intensive AI applications | Lakehouse architecture + scalability | Cloud | Medium-Hard | Pay as you go |
| Knolli | No-code AI platform | No-code + monetization tools | Cloud | Easy | $39-$399 per month |
| Vertex AI | ML + GenAI platform | Gemini integration + unified ML stack | Cloud | Medium | Pay as you go |
| Hugging Face | Experimentation & model access | Huge model ecosystem + flexibility | Cloud / Self-hosted | Medium | $20-$50 per user per month |
| Replicate | Model deployment | Quick model testing & APIs | Cloud | Easy | Pay as you go |
| Modal | Running scalable ML workloads | Serverless + GPU scaling | Cloud | Medium | From $250 per month |
| Pinecone | RAG & semantic search | High-performance vector search | Cloud | Easy | From $50 per month |

True Foundry

True Foundry is a self-hosted gateway and agentic LLMOps platform for secure, cloud-agnostic GenAI/ML deployment. True Foundry provides its own LLM gateway that connects to more than 250 open-source and proprietary LLMs, including OpenAI, Claude, Gemini, Groq, and Mistral. Think of it as a centralized control plane that helps enterprises use AI safely and cost-effectively.

True Foundry prioritizes data privacy and security. The data and models are housed within your cloud or on-premises infrastructure, so no data leaves your domain. True Foundry also gives you full control over how your agents behave, letting you track prompts, tool/model execution, LLM calls, workflow decisions, and execution paths in real time.

Main Features

- AI Gateway that connects to 250+ LLMs and handles model routing

- MCP Gateway that lets you connect all authorized internal or third-party MCP servers

- Agent Gateway that acts as a centralized agent registry and lets you observe how agents behave and track metrics such as agent latency, error rates, retries, and tool invocations

- Prompt management repository for tracking and testing prompts in one place

- AI deployment platform

- Production-grade training and fine-tuning for AI models with production-ready templates
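To make the agent metrics in the list above more concrete (latency, error rates, retries, tool invocations), here is a minimal sketch of how such numbers could be aggregated from per-run records. It is plain Python, not the True Foundry API: the `AgentRun` schema, the `summarize` helper, and the sample values are all assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class AgentRun:
    """One agent execution record (hypothetical schema, not a vendor type)."""
    latency_ms: float
    retries: int
    tool_calls: int
    failed: bool


def summarize(runs: list[AgentRun]) -> dict[str, float]:
    """Aggregate per-run fields into latency, error rate, retries, and tool calls."""
    if not runs:
        raise ValueError("no runs to summarize")
    return {
        "avg_latency_ms": mean(r.latency_ms for r in runs),
        "error_rate": sum(r.failed for r in runs) / len(runs),
        "total_retries": sum(r.retries for r in runs),
        "total_tool_calls": sum(r.tool_calls for r in runs),
    }


# Illustrative sample data only.
runs = [
    AgentRun(latency_ms=120.0, retries=0, tool_calls=2, failed=False),
    AgentRun(latency_ms=480.0, retries=2, tool_calls=5, failed=True),
    AgentRun(latency_ms=300.0, retries=1, tool_calls=3, failed=False),
    AgentRun(latency_ms=100.0, retries=0, tool_calls=1, failed=False),
]
summary = summarize(runs)
print(summary)
```

In practice a platform would collect these records for you; the point of the sketch is only that each dashboard metric reduces to a simple aggregate over traced runs.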

Pricing

True Foundry has 4 pricing packages:

- A Developer package for testing and prototyping new ideas – $0

- A Pro package for small teams for $499/month

- A Pro Plus package for teams that work in highly regulated industries and need stricter data control for $2,999/month

- A custom package for medium and large enterprises

What Makes True Foundry Stand Out?

Choose True Foundry if you’re prioritizing cloud-agnostic flexibility, rapid deployments, and significant cost optimizations.

Amazon SageMaker

Amazon SageMaker is a fully managed AWS platform for building, training, and deploying ML models. You can train models with built-in or custom algorithms, or fine-tune pre-trained models to adapt them to specific datasets and tasks.

One advantage of Amazon SageMaker is that it provides organizations in regulated industries with data security and governance tools. These tools let you manage user permissions and roles, track model versions, and manage model artifacts and metadata to ensure transparency.

Main Features

- Integrated development environment for model development

- Built-in or custom algorithms for model training

- Data labeling service for creating high-quality training datasets

- Real-time and batch inference for serving predictions

- Tools for monitoring ML model performance in real-time

Pricing

Amazon SageMaker uses a pay-as-you-go model where you pay only for the features you use. You can check out the relevant prices on their page.

What Makes Amazon SageMaker Stand Out?

Choose Amazon SageMaker if you already work on AWS and need fully managed infrastructure with built-in governance across the ML lifecycle.

LangSmith

LangSmith is a platform for agent engineering that lets you create, evaluate, and deploy your agents without writing code. It provides a quick and easy way to build custom agents and offers a variety of templates to start with. It integrates with 1000+ chat models, embedding models, tools, sandboxes, and checkpointers to let you quickly build your AI agent.

Main Features

- Standard model interface for each provider, so you can switch providers without changing the logic of the application and avoid vendor lock-in

- Tracing, monitoring, and observability features for monitoring model performance and collecting feedback

- Prompt templates

- Document loaders for third-party applications to import data from various tools and databases
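The "standard model interface" idea from the list above can be sketched in a few lines. This is not the LangSmith or LangChain API; `ChatModel`, `EchoProvider`, `UpperProvider`, and `summarize_ticket` are hypothetical names used only to show why application logic stays unchanged when the provider is swapped.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Minimal provider-agnostic interface (an assumption for this sketch)."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in for one vendor's client; a real one would call a remote API."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperProvider:
    """Stand-in for a second vendor with different behavior."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize_ticket(model: ChatModel, ticket: str) -> str:
    """Application logic depends only on the ChatModel interface."""
    return model.complete(f"Summarize: {ticket}")


# Swapping providers requires no change to summarize_ticket.
print(summarize_ticket(EchoProvider(), "login fails"))
print(summarize_ticket(UpperProvider(), "login fails"))
```

Because `summarize_ticket` only knows about `complete`, moving to another provider means writing one new adapter class rather than touching the application code.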

Pricing

It offers 3 pricing packages:

- Developer package starting at $0 per month, then pay as you go

- Plus package for $39 per seat per month

- Enterprise package with custom pricing

What Makes LangSmith Stand Out?

You can build a simple agent with just 10 lines of code.

Databricks

Databricks is a leading data and AI enterprise platform for building low-latency apps and agents directly on your enterprise data. With Databricks, you can build AI assistants and copilots, ML-powered applications, interactive data apps, or use it to automate manual, time-consuming business processes.

Databricks is built on a Lakehouse architecture that combines data lakes and data warehouses to reduce costs and simplify processing structured and unstructured data. It provides a single architecture for integration, storage, governance, sharing, analytics, and AI, making it easy to manage all your data in one place.

Main Features

- Lakehouse storage with open table formats, centralized governance, and AI data optimization

- Collaborative notebooks for Python, Scala, R, and SQL

- Integration with a variety of BI tools

Pricing

Databricks uses a pay-as-you-go approach with no up-front costs.

What Makes Databricks Stand Out?

One of its advantages is that it processes large amounts of data and easily scales as your business grows.

Knolli

Knolli is a platform for building, scaling, deploying, and monetizing AI copilots within a single no-code workspace. You describe what you want to create, and Knolli turns it into a ready-to-launch framework. You can connect your CRMs, file storage, and databases, upload your documents, and integrate workflows.

Main Features

- Multi-agent architecture

- A variety of templates and pre-built copilots

- Custom branding

- Advanced analytics

- Workflow automation

Pricing

Pricing depends on the number of AI copilots, agents, and admins. The price varies from $39 to $399/month. Also, Knolli has an Enterprise package with custom solutions and more advanced security.

What Makes Knolli Stand Out?

Knolli's main benefits are custom branding, monetization tools, multi-source integration, and privacy-first content ownership.

Vertex AI

Vertex AI is Google Cloud’s unified AI platform that enables organizations to build, deploy, and scale machine learning models and AI applications. It supports the full ML lifecycle, from data preparation to model deployment, and integrates seamlessly with Google’s ecosystem.

Vertex AI is particularly strong in generative AI, offering access to Gemini models and tools for building advanced AI agents and applications with enterprise-grade infrastructure.

Main Features

- Unified platform for ML development and deployment

- Access to Gemini and other foundation models

- AutoML and custom model training

- Feature store for managing ML features

- MLOps tools for monitoring and managing models

Pricing

Vertex AI uses a pay-as-you-go pricing model based on usage of compute, storage, and APIs.

What Makes Vertex AI Stand Out?

It combines powerful generative AI capabilities with a fully managed ML platform, making it ideal for teams already working within the Google Cloud ecosystem.

Hugging Face

Hugging Face is an open-source AI platform and community that provides tools for building, training, and deploying machine learning models, especially in natural language processing. It offers access to thousands of pre-trained models and datasets through its model hub.

It is widely used by developers and researchers who want flexibility, transparency, and access to cutting-edge open-source models.

Main Features

- Model Hub with thousands of pre-trained models

- Transformers library for NLP, CV, and audio tasks

- Datasets library for easy data access

- Inference API for deploying models

- Spaces for building and sharing AI apps

Pricing

Hugging Face provides 3 pricing packages:

- Personal package for $9 per month

- Team package for $20 per month

- Enterprise package starting at $50 per month.

What Makes Hugging Face Stand Out?

Its open-source ecosystem and vast model library make it one of the most flexible platforms for experimentation and rapid development.

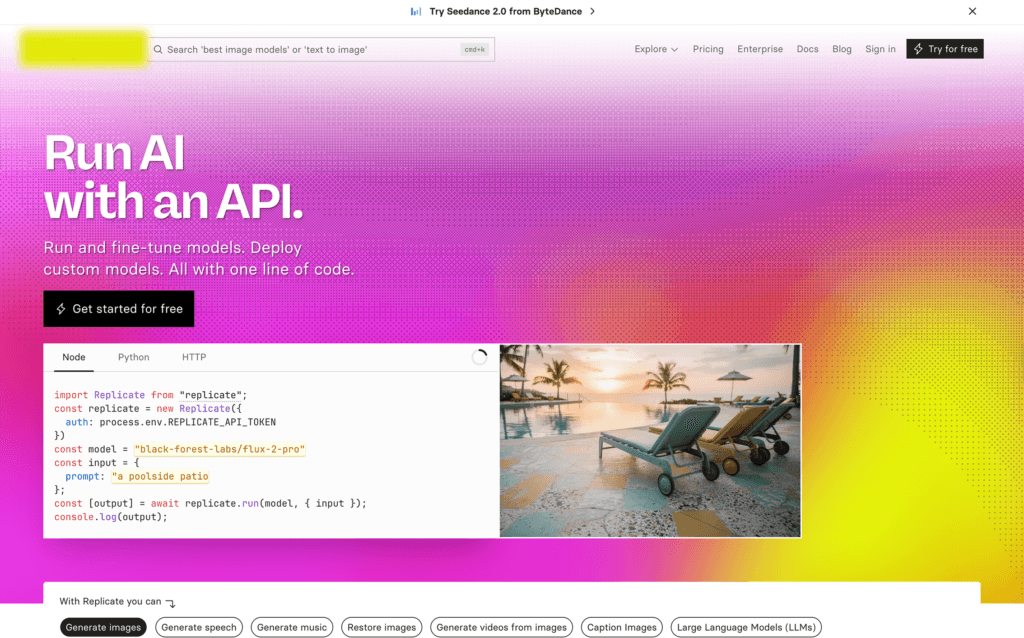

Replicate

Replicate is a platform that allows developers to run and deploy machine learning models in the cloud using simple APIs. It focuses on making open-source models easily accessible without requiring complex infrastructure setup.

With Replicate, you can quickly test and integrate models for tasks like image generation, text processing, and audio transformation.

Main Features

- Simple API to run ML models

- Support for a wide range of open-source models

- Automatic scaling and infrastructure management

- Versioned models for reproducibility

- Easy deployment and sharing

Pricing

It uses a pay-as-you-go pricing model. Some models are billed by hardware and time, others by input and output.

What Makes Replicate Stand Out?

It reduces the complexity of deploying and running ML models, making it ideal for quick prototyping and testing ideas.

Modal

Modal is a serverless platform designed for running AI and ML workloads in the cloud. It allows developers to execute functions, train models, and run inference jobs without managing infrastructure. Modal is optimized for performance-heavy workloads, including GPU-based tasks, and is particularly useful for scaling AI applications.

Main Features

- Serverless execution for ML workloads

- GPU support for high-performance tasks

- Autoscaling infrastructure

- Simple Python-based workflows

Pricing

It has 3 pricing plans:

- Free Starter plan for small teams and independent developers

- Team plan for $250 per month

- Custom plan for a personalized price

What Makes Modal Stand Out?

Its serverless approach to AI infrastructure makes it easy to scale compute-intensive workloads without operational overhead.

Pinecone

Pinecone is a managed vector database designed for building AI applications that rely on semantic search, retrieval, and long-term memory. It is commonly used in retrieval-augmented generation (RAG) systems and AI agents.

Main Features

- Fully managed vector database

- High-performance similarity search

- Real-time indexing and updates

- Scalable architecture for large datasets

- Integration with popular AI frameworks
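To illustrate what "similarity search" means here, the following is a brute-force sketch in plain Python: it ranks stored vectors by cosine similarity to a query vector. It does not use the Pinecone client and is not how a managed vector database is implemented internally; the document IDs and three-dimensional vectors are made up for the example (real embeddings have hundreds or thousands of dimensions).

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 2):
    """Return the k (doc_id, score) pairs most similar to the query."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy "embeddings" (hypothetical values for illustration only).
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.0],
    "returns-howto": [0.8, 0.2, 0.1],
}
results = top_k([1.0, 0.0, 0.0], index, k=2)
print([doc_id for doc_id, _ in results])  # ['refund-policy', 'returns-howto']
```

In a RAG system, the top-ranked documents would then be passed to the LLM as context. A linear scan like this stops being practical as the collection grows, which is the gap a dedicated vector database is meant to fill.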

Pricing

It provides a free Starter package for small applications, a Standard package for $50 per month, and an Enterprise package for $500 per month.

What Makes Pinecone Stand Out?

It provides an optimized, scalable solution for vector search, a necessary component of modern AI applications and agent systems.

Teamvoy’s Expert Approach to LLMOps

At Teamvoy, we don’t just recommend LLMOps platforms—we live the challenges with enterprise and fast-growing clients. Our LLMOps services support every step of the journey:

- Platform selection: We guide teams toward LLMOps tools that align with their workflows and growth plans.

- Integration and onboarding: Our engineers help connect the best LLMOps software to your data, pipelines, and cloud infrastructure, so you don’t have to start from scratch.

- Monitoring and improvement: We train teams to set up dashboards, alerts, and regular testing to catch issues before they become costly.

- Continuous optimization: We build reference architectures for RAG (retrieval-augmented generation), agent workflows, and more to help models get better over time.

A recent client found that switching to the LLMOps platform we recommended reduced model downtime by 70% and increased maintainers’ productivity. These results come from hands-on, collaborative work — not just picking from a list.

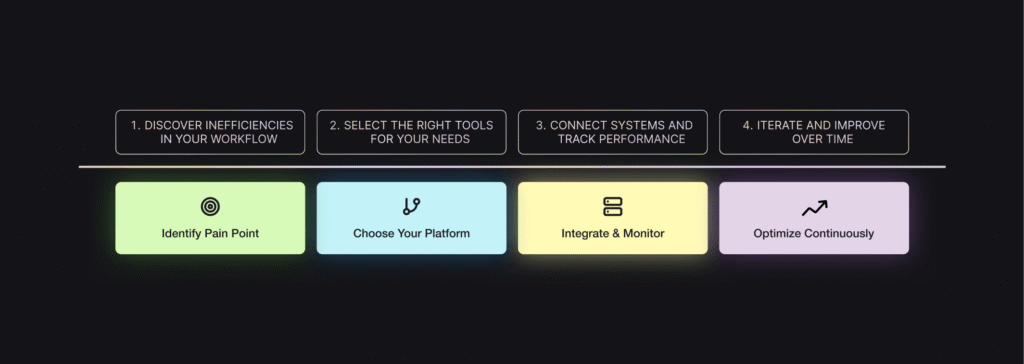

Best Practices and Recommendations from Teamvoy

From working hands-on, here’s what I recommend to any team planning an LLMOps rollout:

- Don’t chase buzzwords — start with a real pain point, like slow deployments or unreliable model outputs.

- Use open platforms (when possible) to avoid lock-in and let your stack evolve.

- Invest in observability early. It’s always easier to tune models when you have clear logs and metrics.

- Plan for optimization from day one. Set up feedback loops and regular prompt testing, not just after you launch.

- Build your LLM stack for change. LLMOps moves fast; today’s best LLMOps software may get outpaced in a year.
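As one way to picture the "regular prompt testing" recommended above, here is a minimal sketch of a prompt regression check. Everything in it is an assumption for illustration: `fake_model` stands in for a real LLM call, and the test cases and expected substrings are invented. A real setup would call your deployed model and use a richer evaluation than substring matching.

```python
from typing import Callable

# Each case pairs a prompt with a substring the answer must contain
# (hypothetical cases for illustration).
CASES = [
    ("What is the refund window?", "30 days"),
    ("Which plan includes SSO?", "Enterprise"),
]


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call, returning canned answers."""
    answers = {
        "What is the refund window?": "Refunds are accepted within 30 days.",
        "Which plan includes SSO?": "SSO is part of the Pro plan.",
    }
    return answers.get(prompt, "")


def run_regression(
    model: Callable[[str], str], cases: list[tuple[str, str]]
) -> tuple[float, list[str]]:
    """Return the pass rate and the prompts whose answers missed the expectation."""
    failures = [prompt for prompt, expected in cases if expected not in model(prompt)]
    pass_rate = 1 - len(failures) / len(cases)
    return pass_rate, failures


pass_rate, failures = run_regression(fake_model, CASES)
print(pass_rate, failures)  # 0.5 ['Which plan includes SSO?']
```

Running a check like this on every prompt or model change, and alerting when the pass rate drops, is the simplest form of the feedback loop described in the list.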

In one engagement, we built a pipeline using LlamaIndex, OpenLLMetry, and custom guardrails. Three months after launch, when the client wanted to add multi-provider support, our modular approach saved 40 percent of the expected development time.

Partnership, steady iteration, and clear measurement keep LLM deployments healthy and future-proof. That’s what sets the best teams apart.

Conclusion

To choose the right LLMOps platform, get clear on what you’re actually building:

- Simple AI feature (chatbot, content generation) – you don’t need heavy infrastructure, you need to quickly test your idea

- AI agents / multi-step workflows – you need orchestration and observability

- Enterprise AI system with sensitive data – you need governance and self-hosting

- Data-heavy AI applications (RAG, analytics) – you need strong data infrastructure

Enterprise LLMOps platforms like True Foundry and LangSmith focus on control and observability, while Amazon SageMaker and Vertex AI offer full-scale infrastructure for enterprise use cases. At the same time, tools like Replicate or Modal make it easier to move fast and experiment.

Choosing the best LLMOps software is about matching technology with your goals. Start with what you actually need, avoid overcomplicating your stack too early, and prioritize flexibility. With the right foundation in place, you’ll be able to iterate faster, control costs, and build AI systems that deliver real business value.