AI agent costs balloon in three predictable places — token burn from looping workflows, retries from unreliable runs, and migration fees from vendor lock-in. Reddit practitioners report 70-120x cost spikes on multi-step agents, and reliability uplifts from 80% to 99.9% that roughly triple spend. Cap loops, benchmark frameworks, and use abstraction layers like LiteLLM to keep AI agent costs predictable in 2026.

Key takeaways:

- AI agent costs hide in three compounding places: looping token burn, reliability retries, and vendor lock-in. Ignoring any one of them can turn a $10 prototype into a $1,200 monthly line item on the same workload.

- Multi-step agents can spike from 2,000 to 120,000 tokens on a single task. Production benchmarks show a 70x cost spread between a linear LLM call and a planning-heavy agent.

- Pushing reliability from 80% to 99.9% roughly triples cost. Structured prompting with DSPy or Guidance cuts tool-call errors by about 30%, and runtime guardrails like NVIDIA NeMo Guardrails block bad tool invocations before tokens are spent.

- Framework choice changes per-task cost by 6x. LangChain runs ~$0.50, CrewAI ~$0.30, AutoGen ~$0.15, and custom DSPy stacks ~$0.08 on equivalent workloads — always benchmark against your real traffic.

- Vendor lock-in is the most expensive mistake. Wrap every model call behind an abstraction layer (LiteLLM, Haystack) and default to open-weight models (Llama, Mistral, Qwen) for any workload over 1,000 calls per day.

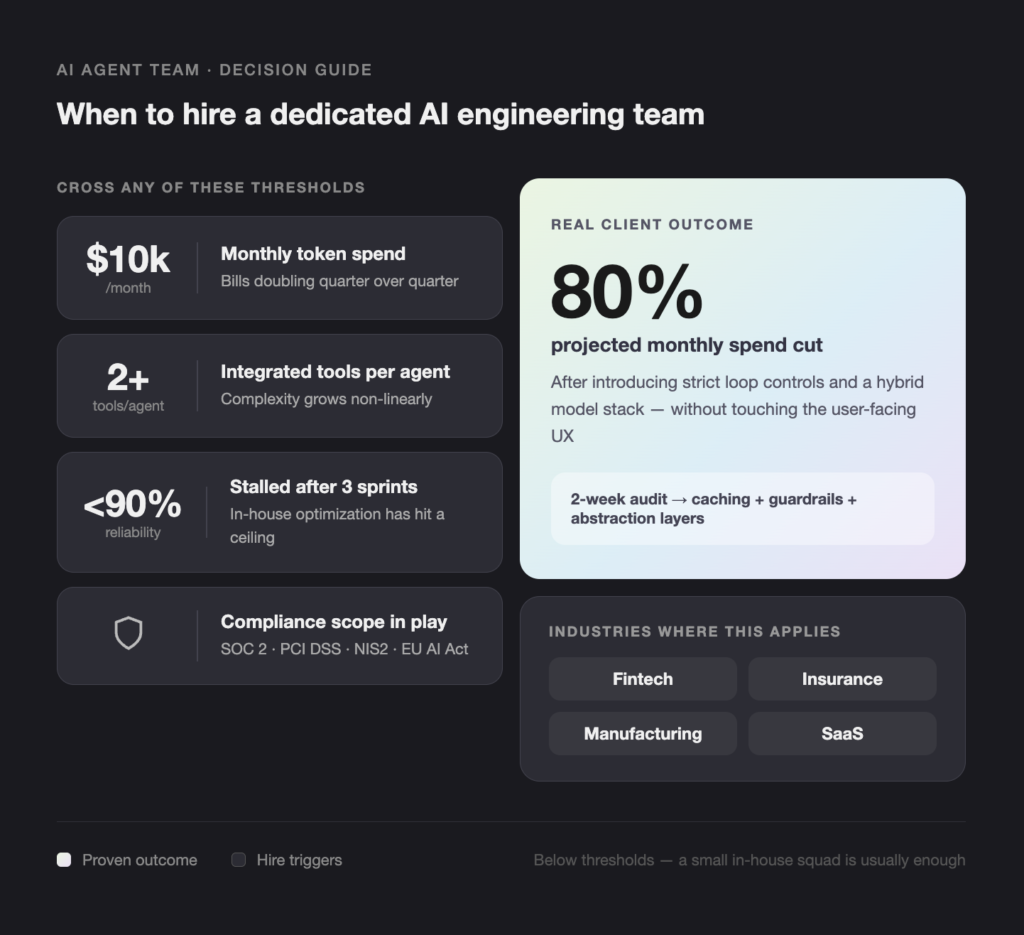

- Bring in a dedicated AI engineering team when monthly token spend tops $10,000, reliability stalls below 90% after three sprints, or compliance scope (SOC 2, PCI DSS, NIS2, EU AI Act) is in play.

Introduction

AI agents are now embedded in customer support, claims handling, code review, and shop-floor automation across fintech, insurance, and manufacturing. Most pilots launch on a single LLM provider, hit early wins, then stall when the production invoice arrives. This post is for CTOs, engineering directors, and product leads who want a peer-level read on where AI agent budgets actually leak — and what to do before the next quarterly review. The signal here is pulled from Reddit threads, production benchmarks, and Teamvoy’s own client deployments.

What drives the hidden costs of AI agents — and why does it matter?

The hidden costs of AI agents come from three compounding sources: token-heavy multi-step loops, unreliable runs that retry until they succeed, and vendor APIs that turn migration into a rewrite. Each one is small in isolation. Together they can push a $10 prototype into a $1,200 production line item on the same workload.

A practitioner on r/LocalLLaMA shared a code review agent that grew from 2,000 tokens on a simple bug fix to 120,000 tokens after self-improvement loops kicked in. That is 60x the tokens per task. Run it on 1,000 daily tickets and the token bill grows by the same factor before any retries are counted. Production benchmarks consistently show a 70x spread in cost per task between a linear LLM call and a planning-heavy agent doing the same job.
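As a rough check on that arithmetic, the sketch below prices both runs at one flat token rate. `PRICE` is a hypothetical blended rate chosen for illustration, not a vendor quote. At a flat rate the cost multiplier equals the token ratio, so a bill that grows faster than tokens per task has to come from something else, such as retries or pricier output tokens.

```python
def daily_cost(tokens_per_task: int, tasks_per_day: int,
               usd_per_million_tokens: float) -> float:
    """Daily spend for a workload, using a single blended token price."""
    return tokens_per_task * tasks_per_day * usd_per_million_tokens / 1_000_000


PRICE = 5.0  # hypothetical blended USD per 1M tokens (assumption)

linear = daily_cost(2_000, 1_000, PRICE)     # simple bug-fix run
looping = daily_cost(120_000, 1_000, PRICE)  # run with self-improvement loops
multiplier = looping / linear

print(f"linear: ${linear:.2f}/day, looping: ${looping:.2f}/day, "
      f"{multiplier:.0f}x")
```

With these placeholder numbers the linear run costs $10 a day and the looping run $600 a day on the same 1,000 tickets.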

Common symptoms in production:

- Token usage that grows non-linearly with task complexity

- Retry rates above 15% on tool-calling agents

- A single vendor pricing change forcing a re-architecture

- Engineers writing prompt formats that don’t port to other models

- Monthly cost variance above 30% with no change in traffic
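Two of these symptoms are easy to check from logs. The sketch below is one possible way to do it, assuming you record attempts per task and monthly spend. The function names are hypothetical, and "cost variance" is read here as month-over-month change, which is an interpretation rather than a definition from the benchmarks.

```python
def retry_rate(attempts_per_task: list[int]) -> float:
    """Share of tasks that needed more than one attempt."""
    retried = sum(1 for attempts in attempts_per_task if attempts > 1)
    return retried / len(attempts_per_task)


def month_over_month_change(previous_usd: float, current_usd: float) -> float:
    """Relative change in spend between two months."""
    return abs(current_usd - previous_usd) / previous_usd


def flag_symptoms(attempts_per_task: list[int],
                  previous_usd: float, current_usd: float) -> list[str]:
    """Return the symptoms from the list above that the data trips."""
    flags = []
    if retry_rate(attempts_per_task) > 0.15:
        flags.append("retry rate above 15%")
    if month_over_month_change(previous_usd, current_usd) > 0.30:
        flags.append("monthly cost variance above 30%")
    return flags


print(flag_symptoms([1, 1, 1, 2, 3], previous_usd=1000.0, current_usd=1400.0))
```

The cost check only means something if traffic was flat across the two months, so compare task counts before acting on it.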

These are not edge cases. They show up in roughly half of the AI agent codebases we audit at Teamvoy. For a wider view of what to instrument before you scale, see our guide on how to build an AI development workflow.

How do you solve AI agent cost problems without breaking reliability?

Cap the loop, benchmark the framework, and abstract the vendor, in that order. Loop control frees up budget fastest. Benchmarking shows which framework is worth standardizing on. Abstraction protects the next 18 months of work.

1. Cap token burn at the loop level

Aggressive context summarization every 2-3 steps trims 40-60% of tokens on long-running agents, based on production reports cross-checked against client deployments. Combine that with hard early-stop rules: if an agent retries five times without progress, escalate to a human or kill the run.
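A minimal sketch of those two rules, assuming the agent is driven by a `step` callable and a `summarize` callable that you supply. Both are hypothetical stand-ins for your own LLM calls; no framework API is implied.

```python
from dataclasses import dataclass
from typing import Callable

SUMMARIZE_EVERY = 3      # compact context every N steps (the text suggests 2-3)
MAX_STALLED_RETRIES = 5  # consecutive no-progress steps before escalating


@dataclass
class StepResult:
    note: str            # text this step adds to the context
    progressed: bool     # did the step move the task forward?
    done: bool = False   # did the step finish the task?


def run_agent(step: Callable[[list[str]], StepResult],
              summarize: Callable[[list[str]], str],
              max_steps: int = 50) -> tuple[str, list[str]]:
    """Run steps until done, stalled, or out of steps."""
    context: list[str] = []
    stalled = 0
    for i in range(1, max_steps + 1):
        result = step(context)
        context.append(result.note)
        if result.done:
            return "done", context
        stalled = 0 if result.progressed else stalled + 1
        if stalled >= MAX_STALLED_RETRIES:
            return "escalate", context  # hand off to a human or kill the run
        if i % SUMMARIZE_EVERY == 0:
            context = [summarize(context)]  # replace history with a summary
    return "escalate", context
```

The stall counter resets on any progress, so a long task that keeps advancing is not killed. Only five no-progress steps in a row trigger the handoff.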

A second saving comes from model right-sizing. Route simple classification or extraction to an open-weight model (Llama 3, Mistral, Qwen) and reserve frontier models for planning steps. That single change usually cuts token spend 50-70% with no measurable quality drop.
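Right-sizing can start as a lookup table. The sketch below is illustrative only. The model names are placeholders, and the saving estimate assumes token volume stays the same after routing.

```python
SMALL_MODEL = "open-weight-small"  # placeholder for a Llama, Mistral, or Qwen deployment
FRONTIER_MODEL = "frontier-large"  # placeholder for a frontier API model

SIMPLE_TASKS = {"classification", "extraction"}


def pick_model(task_type: str) -> str:
    """Send simple tasks to the small model, everything else to the frontier model."""
    return SMALL_MODEL if task_type in SIMPLE_TASKS else FRONTIER_MODEL


def estimated_saving(share_routed: float, price_ratio: float) -> float:
    """Fraction of spend saved versus an all-frontier setup.

    share_routed: share of tokens moved to the small model (0..1)
    price_ratio:  small-model price divided by frontier price (0..1)
    """
    return share_routed * (1 - price_ratio)


print(pick_model("extraction"), pick_model("planning"))
print(f"{estimated_saving(share_routed=0.7, price_ratio=0.1):.0%}")
```

The inputs to `estimated_saving` are hypothetical. Measure your own token split and price ratio before quoting a figure.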

2. Pay the reliability tax up front

Going from 80% to 99.9% reliability roughly triples cost, mostly from retries and fallback chains. Two interventions cut the retry rate before it gets expensive:

- Structured prompting with DSPy or Guidance reduces tool-call errors by about 30%.

- Open-source guardrails like NVIDIA NeMo Guardrails inspect calls in real time and block bad tool invocations before tokens are spent.

Tie every agent to a per-task budget cap. If the agent can’t hit its goal inside the cap, it hands off. That one rule prevents most runaway behavior.
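One way to express that rule in code is sketched below, with a hypothetical `TaskBudget` class and a flat token price. A real agent would charge the budget after each model call and hand off when the exception fires.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a task spends past its cap."""


@dataclass
class TaskBudget:
    cap_usd: float
    spent_usd: float = 0.0

    def charge(self, tokens: int, usd_per_million_tokens: float) -> None:
        """Record the cost of one call and raise if the cap is passed."""
        self.spent_usd += tokens * usd_per_million_tokens / 1_000_000
        if self.spent_usd > self.cap_usd:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.2f} of ${self.cap_usd:.2f} cap")


def run_with_cap(step_token_counts: list[int], budget: TaskBudget,
                 usd_per_million_tokens: float) -> str:
    """Replay token counts per step; return 'handoff' once the cap is hit."""
    for tokens in step_token_counts:
        try:
            budget.charge(tokens, usd_per_million_tokens)
        except BudgetExceeded:
            return "handoff"
    return "completed"


# Hypothetical numbers: three 10,000-token steps against a $0.12 cap.
print(run_with_cap([10_000] * 3, TaskBudget(cap_usd=0.12), 5.0))
```

Charging after the call means the cap can be overshot by one step. If that matters, estimate tokens up front and check before calling.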

3. Compare frameworks against your real workload

Production benchmarks show meaningful gaps between popular agent frameworks. The numbers will shift with your domain — a fintech KYC agent looks nothing like a manufacturing MES agent — but the spread is consistent.

| Framework | Token efficiency | Reliability | Lock-in risk | Cost per task |
| --- | --- | --- | --- | --- |
| LangChain | Low | ~75% | High (OpenAI default) | $0.50 |
| CrewAI | Medium | ~85% | Medium | $0.30 |
| AutoGen | High | ~90% | Low | $0.15 |
| Custom (DSPy) | Highest | ~95% | None | $0.08 |

Always profile against your own traffic before standardizing. A framework that wins on a benchmark suite can lose on your specific tool-call patterns.
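A small report over your own logged runs is enough to start. The sketch below assumes each run is recorded with its framework, cost, and outcome. The `Run` record and `benchmark_report` function are hypothetical names, and the sample data is made up.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Run:
    framework: str
    cost_usd: float
    succeeded: bool


def benchmark_report(runs: list[Run]) -> dict[str, dict[str, float]]:
    """Per-framework cost per task, success rate, and cost per successful task."""
    grouped: dict[str, list[Run]] = defaultdict(list)
    for run in runs:
        grouped[run.framework].append(run)

    report = {}
    for name, group in grouped.items():
        total = sum(r.cost_usd for r in group)
        wins = sum(1 for r in group if r.succeeded)
        report[name] = {
            "cost_per_task": total / len(group),
            "success_rate": wins / len(group),
            # Failed runs still cost money, so divide by successes only.
            "cost_per_success": total / wins if wins else float("inf"),
        }
    return report


sample = [Run("A", 0.50, True), Run("A", 0.50, False),
          Run("B", 0.08, True), Run("B", 0.08, True)]
for name, stats in benchmark_report(sample).items():
    print(name, stats)
```

Cost per successful task is often the more honest number, because a cheap framework that fails half the time pays for every failed run too.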

4. Avoid vendor lock-in early

Deep single-vendor integration is the most expensive mistake we see. One Reddit thread cited $100,000+ to rework prompts and tool schemas after a planned switch from a frontier API to a local Llama deployment.

Three rules:

- Keep prompt templates, tool schemas, and evaluation sets in version control, decoupled from any vendor SDK.

- Wrap every model call behind an abstraction layer (LiteLLM, Haystack, or a thin internal SDK). Our roundup of LLMOps tools for building AI platforms in 2026 covers shortlist candidates.

- Default to open-weight or self-hosted models for any workload that runs more than 1,000 times a day.
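The second rule can be as small as the sketch below: a thin internal client that application code calls, with the vendor hidden behind one interface. All names here are hypothetical, and `EchoBackend` is a stand-in so the example runs offline. In practice the backend would wrap a vendor SDK or a library such as LiteLLM.

```python
from typing import Optional, Protocol


class ChatBackend(Protocol):
    """Anything that can turn chat messages into a reply."""

    def complete(self, model: str, messages: list[dict[str, str]]) -> str: ...


class EchoBackend:
    """Offline stand-in; a real adapter would call a vendor SDK here."""

    def complete(self, model: str, messages: list[dict[str, str]]) -> str:
        return f"[{model}] {messages[-1]['content']}"


class ModelClient:
    """The only model interface application code is allowed to import."""

    def __init__(self, backend: ChatBackend, default_model: str) -> None:
        self._backend = backend
        self._default_model = default_model

    def ask(self, prompt: str, system: Optional[str] = None) -> str:
        messages = []
        if system:
            messages.append({"role": "system", "content": system})
        messages.append({"role": "user", "content": prompt})
        return self._backend.complete(self._default_model, messages)


client = ModelClient(EchoBackend(), default_model="model-a")
print(client.ask("Summarize ticket 42"))
```

Swapping providers then means writing one new backend class and changing the constructor call, while prompts and tool schemas stay in version control untouched.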

When should you hire a dedicated team to build production-grade AI agents?

Bring in a dedicated team when your agent project crosses any of three thresholds: monthly token spend above $10,000, more than two integrated tools per agent, or compliance scope (SOC 2, PCI DSS, NIS2, EU AI Act). Below those, a small in-house squad with strong prompt discipline is usually enough.

Signals that justify outside help:

- Token bills doubling quarter over quarter

- Reliability stuck below 90% after three sprints

- Roadmap requires swapping providers within 12 months

- Regulated industry (fintech, insurance, healthtech) with audit obligations

- Fewer than two in-house engineers with production LLM experience

Teamvoy builds vendor-agnostic agent stacks for fintech, insurance, manufacturing, and SaaS clients in the US and the Nordics. A typical engagement starts with a two-week audit of token flows, reliability metrics, and migration risk, followed by a build phase that adds caching, guardrails, and abstraction layers. One recent client cut projected monthly spend by 80% after we introduced strict loop controls and a hybrid model stack — without touching the user-facing UX.

If you’re weighing whether to expand the in-house team or partner externally, our breakdown of staff augmentation vs. outsourcing covers the trade-offs at each team size.

Conclusion

AI agents pay for themselves quickly when the architecture stays disciplined. Loop control, hard spend caps, and an abstraction layer are the three habits that separate a $1,200 month from a $10 one on the same workload. Frameworks and providers will keep churning, so the teams that win are the ones designed to swap stacks without rewriting the application. Audit your current agents this quarter, publish per-task cost benchmarks, and decouple from any single vendor SDK before the next pricing change.

Next steps

- Run a one-week audit on your highest-traffic agent’s token usage and retry rate.

- Add a per-task budget cap and an early-stop rule before scaling further.

- Book a 30-minute call with a Teamvoy delivery lead to benchmark your stack.

For raw signal from practitioners, r/MachineLearning and the Stanford AI Index Report are worth bookmarking.