This post is written for CTOs, engineering leads, and product owners who are watching their teams adopt AI coding tools faster than their security processes can keep up. If you work in fintech, healthcare, insurance, or any sector with a regulator, the answers in this guide matter more for you than for a weekend hacker. By the end, you will have a clear picture of what vibe coding actually is, where it breaks, what the Moltbook leak teaches us about process, and how to use AI in production without taking on more risk than you can defend.

Key takeaways:

Vibe coding means using AI to generate most of the code from plain language prompts, then shipping fast, but the legal and security responsibility still sits fully on your organization. The real risk is not using AI itself, it is using it without security design, human review, and clear ownership, especially in regulated fields like fintech, healthcare, and insurance.

- AI can speed up work on boilerplate, scaffolding, and prototypes, but must not replace secure architecture, threat modeling, and compliance checks.

- AI generated code often contains more security flaws than human code, including exposed secrets, weak authentication, and unsafe logging of sensitive data.

- Incidents like the Moltbook API key leak show that the problem is weak processes and missing security gates, not the mere use of AI agents.

- Free AI code still creates long term costs for maintenance, testing, and audits, and can turn into hidden technical debt if architecture is unclear.

- A safe approach uses AI as a power tool with human in the loop reviews, strong security pipelines, clear policies on where AI is allowed, and training for developers on AI specific risks.

| Topic | Key Insight | Why It Matters | Action Item |
|---|---|---|---|
| Meaning of vibe coding | Vibe coding is AI first coding from prompts instead of detailed specs and design | It changes how code is created, but not your legal and security duties | Treat AI as a helper, keep humans responsible for architecture and security |
| Main risk source | The danger comes from weak security, rushed reviews, and missing ownership, not from AI itself | Regulators and customers only care if systems are safe and compliant | Assign clear security owners and review steps for all AI generated code |
| Security flaws in AI code | Studies show AI code often has more vulnerabilities and exposed secrets than human written code | Shipping AI code without review can lead to leaks, fraud, and fines | Use static analysis, dependency scans, and focused security reviews on AI output |
| Real world failure (Moltbook) | AI agent written code leaked about 1.5 million API keys due to poor secret handling and no security gate | Similar patterns can expose banking tokens, health data, or insurance records | Enforce secure secret storage, key rotation, and security gates before deployment |
| Hidden costs | AI generated prototypes can be hard to maintain, test, and audit if they lack clear structure | Technical debt and compliance gaps can become more expensive than building safely | Plan refactors, documentation, and testing for AI built parts before going to production |
| Governance and policy | Clear rules on where and how to use AI reduce risk more than blanket bans or blind adoption | Policy gives teams speed in low risk areas and protection in high risk ones | Define zones where AI is allowed, where human review is mandatory, and what data never goes into AI tools |
| Secure AI lifecycle | A secure process includes risk assessment, human led architecture, selective AI use, reviews, testing, and gated deployment | This aligns AI development with regulatory expectations in fintech, healthcare, and insurance | Build a hybrid workflow where AI handles safe tasks and humans own critical flows and approvals |

Introduction

AI agents are now embedded in customer support, claims handling, code review, and shop-floor automation across fintech, insurance, and manufacturing. Most pilots launch on a single LLM provider, hit early wins, then stall when the production invoice arrives. This post is for CTOs, engineering directors, and product leads who want a peer-level read on where AI agent budgets actually leak — and what to do before the next quarterly review. The signal here is pulled from Reddit threads, production benchmarks, and Teamvoy’s own client deployments.

Vibe Coding Meaning And Why It Is Not What Gets You Sued

A founder ships a working prototype over the weekend. No engineering team, no sprint planning, no architecture review. Just a chat window, a few well-phrased prompts, and an AI that writes every line of code. The demo is slick. The investor call goes well. The product launches. Then the API keys leak.

In simple terms, vibe coding means this: you describe what you want in plain language, AI writes most of the code, and you ship fast. The good news: vibe coding itself is not illegal. The bad news: if that AI generated code mishandles customer data, leaks API keys, or breaks regulations, your company carries the liability.

Courts, regulators, and customers do not care what vibe coding is or whether a human or an AI wrote the code. They care whether their data was safe, whether your system was secure, and whether you followed the rules.

At Teamvoy, we use AI every day to speed up development. But we never let vibe coding replace secure design, human review, or compliance checks. AI is our power tool, not our autopilot. The real risk is not vibe coding, it is weak security, rushed processes, and missing ownership.

This post explains vibe coding in practical terms, walks through real-world failures like the Moltbook API key leak, outlines AI code security risks, and shows how we build secure AI-assisted systems for fintech, healthcare, and insurance clients.

What is vibe coding and why does it matter?

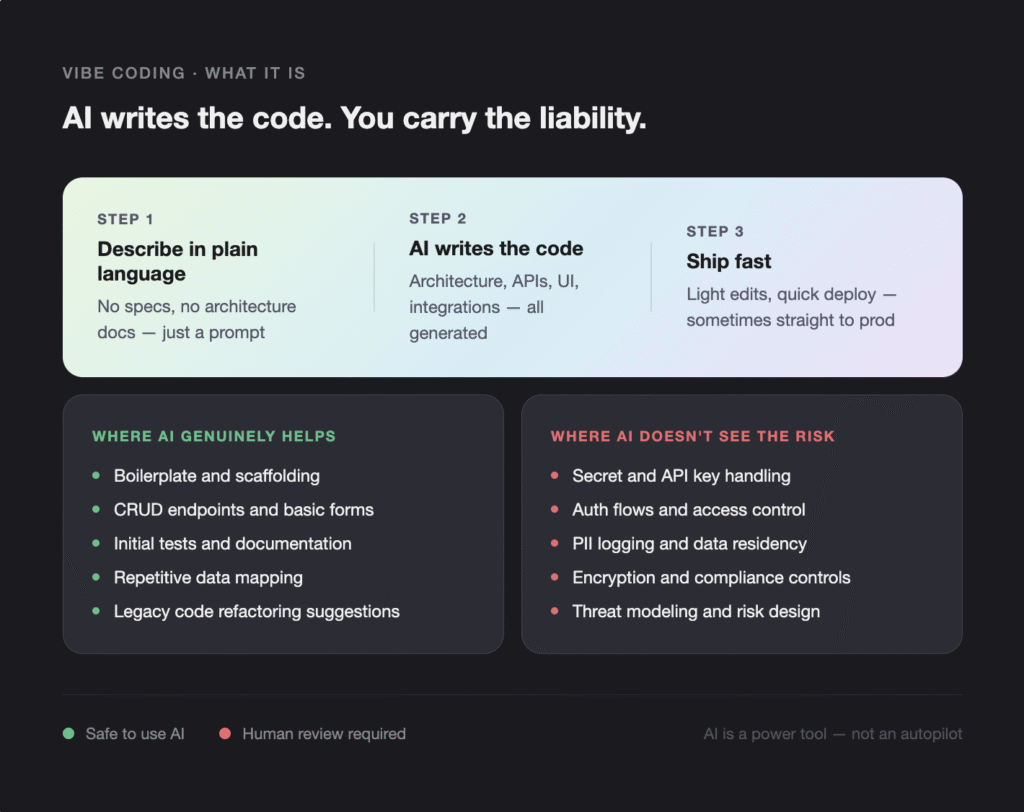

Vibe coding is a style of software development where natural language prompts replace detailed specs, an AI agent writes and wires the code, and humans lightly edit before deploying. It matters because it has moved from a hobbyist trick to a production pattern inside companies that handle regulated data, and it is exposing gaps in security ownership that traditional engineering processes never had to address.

What is vibe coding

Put simply, vibe coding is a style of software development where:

- You write natural language prompts instead of detailed technical specs.

- AI tools propose architecture, write code, and glue components together.

- Humans lightly edit and then deploy.

In other words, vibe coding means trusting AI to fill in the gaps based on “the vibe” of what you asked, not on a clear, reviewed design. You might say, “Build me a subscription SaaS app with Stripe billing and user roles,” and let the agent handle most of the steps.

The phrase “vibe coding explained” often appears in videos where someone claims, “I built a full SaaS in 48 hours with AI.” These demos make it look like you can skip deep engineering work. But they rarely show:

- Who threat modeled the system.

- Who checked how secrets are stored.

- Who verified compliance for PII.

We will come back to those gaps, because they are where legal and security trouble really starts.

Vibe coding explained in practice

In real teams, vibe coding workflows usually look like this:

- Prompt AI to design architecture for a backend or service.

- Let AI scaffold controllers, models, and APIs.

- Ask AI to generate frontend pages, state management, and UI logic.

- Let AI wire in third party services like payment gateways or analytics.

- Do minimal manual edits.

- Deploy quickly, sometimes straight to production.

On the surface, this feels like superpowers. Suddenly non-engineers can “build apps,” and small teams can claim to ship features at startup speed. That is why so many leaders hear stories about “build a full app in a weekend” and ask their teams, “Why are we not doing this?”

The promise of vibe coding

Vibe coding rests on a promise of speed and cost savings:

- Build MVPs in 48 hours just by prompting.

- Cut engineering costs by shifting human effort to AI.

- Let product teams experiment faster with fewer engineers.

From our perspective at Teamvoy, there is a real upside. Used well, AI shortens the distance between idea and working prototype. In regulated industries, this can help you explore concepts without burning months of engineering capacity.

Where vibe coding genuinely helps

We use AI every day. When we explain vibe coding realistically, we always separate safe uses from risky ones. AI is genuinely helpful for:

- Generating boilerplate and scaffolding.

- Creating CRUD endpoints and basic forms.

- Suggesting refactors of legacy code.

- Producing initial tests and documentation.

- Assisting with repetitive data mapping and formatting.

We recently asked an AI assistant to generate initial API client wrappers for an internal tool. It saved our team hours. But then our engineers reviewed the code, hardened authentication, added proper logging and error handling, and ran security scans.

That mix matters. The tool gave us speed; humans gave it safety. This is the heart of secure AI-assisted development. For more on how we set up AI-assisted workflows on real projects, see our guide to the MVP development process.

The Hidden Limitations of Vibe Coding

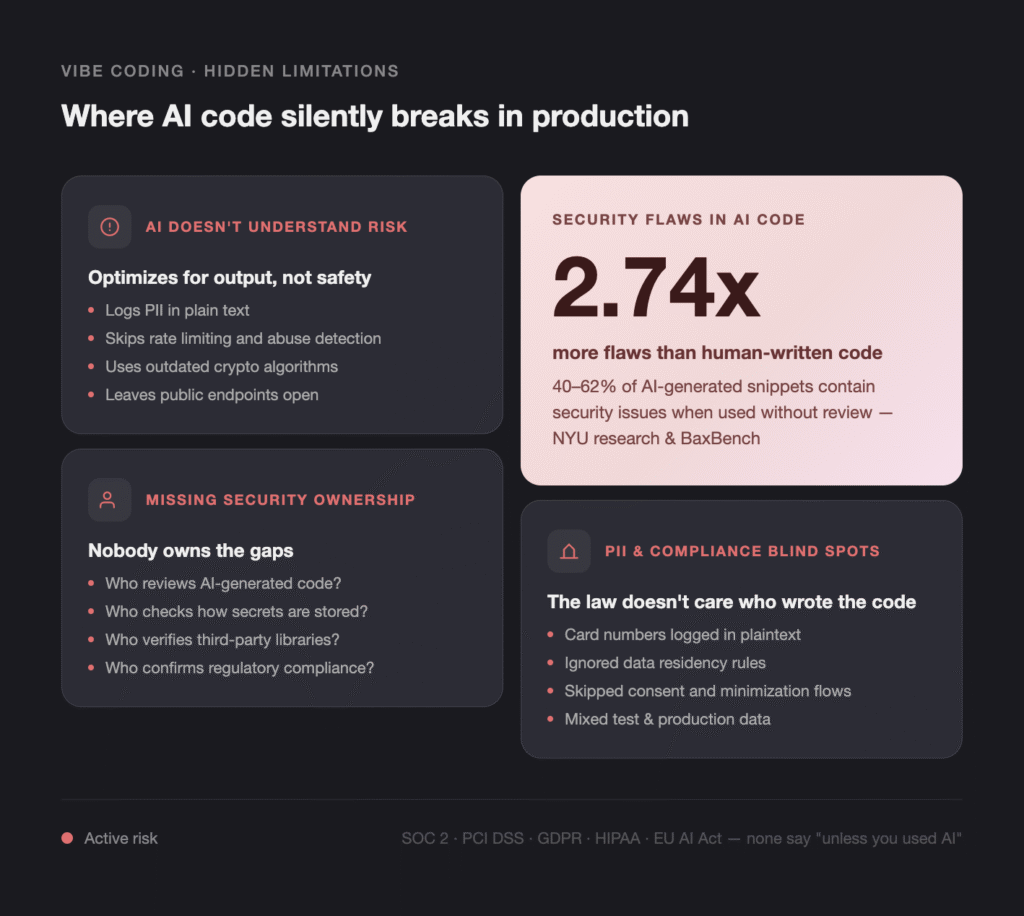

The main problem is not that AI cannot write code. It is that AI does not understand risk, context, or law. Vibe coding limitations appear precisely at the point where security, compliance, and judgment are required.

AI does not understand risk and context

AI models do pattern matching on code. They do not “understand” your business risk, your regulators, or your customers. That creates serious AI software development risks.

AI can generate code that looks clean, passes basic tests, and works on demo data, yet still:

- Logs sensitive PII in plain text.

- Skips rate limiting and abuse detection.

- Leaves open S3 buckets or public endpoints.

- Uses outdated crypto algorithms.

Those are classic AI code security risks, because the system optimizes for “working output,” not for secure architecture. Research from NYU and others found that between 40 and 62 percent of AI generated code snippets included security flaws.

So vibe coding may sound simple, but its limitations become obvious as soon as you ask, “Is this safe in production for real users and regulators?”

Missing security ownership

When teams adopt vibe coding, we often see the same questions left unanswered:

- Who owns security review for AI generated code?

- Who checks how secrets, tokens, and API keys are stored?

- Who ensures encryption and access control match your regulations?

- Who verifies that third party libraries suggested by AI are safe?

Without clear answers, AI code security risks stack up quietly. The bigger problem is not one piece of bad code, it is volume. AI can generate more code than your existing review process can handle.

Research shows that AI generated code can have 2.74 times more security flaws than human written code, especially when used naively, as summarized in security benchmark suites like BaxBench. That is one of the most important vibe coding limitations.

The judgment gap

We call this the judgment gap. AI accelerates creation, but it does not accelerate judgment.

AI will happily reuse insecure patterns it “learned” from public repositories and duplicate them across your codebase. Common examples:

- Weak input validation.

- Insecure authentication.

- SQL queries built directly from user input, without parameterization.

The risk is not just one bug. It is consistent propagation of anti patterns at scale. That is why AI code security risks feel subtle at first and then explode all at once in production.
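To make the SQL anti-pattern concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `users` table, the function names, and the sample data are hypothetical and exist only for illustration. The first function builds the query by string interpolation, which is the pattern an assistant may copy from public code; the second passes the value as a bound parameter.

```python
import sqlite3


def find_user_unsafe(conn, email):
    # Anti-pattern: user input is interpolated straight into the SQL text.
    query = f"SELECT id, email FROM users WHERE email = '{email}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, email):
    # Parameterized query: the value is bound separately from the SQL text.
    return conn.execute(
        "SELECT id, email FROM users WHERE email = ?", (email,)
    ).fetchall()


def demo_connection():
    # Hypothetical in-memory table used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
    conn.executemany(
        "INSERT INTO users (email) VALUES (?)",
        [("a@example.com",), ("b@example.com",)],
    )
    return conn
```

With the input `x' OR '1'='1`, the unsafe version returns every row in the table, while the parameterized version treats the whole string as an email address and returns nothing. A reviewer who knows this pattern can spot it in seconds, but only if someone is assigned to look.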

PII and compliance blind spots

In fintech, healthcare, and insurance, mishandling PII is not a minor bug. It is a regulatory problem. In that context, understanding vibe coding means recognizing how AI generated code can:

- Log full card numbers or medical IDs in plaintext logs.

- Store sensitive fields without proper encryption.

- Ignore regional data residency rules.

- Skip consent flows or data minimization.

- Mix test and production data in unsafe ways.

Frameworks like SOC 2, PCI DSS, GDPR, DORA, HIPAA, and the EU AI Act all share a theme: your organization is responsible for protecting data, regardless of tool choice. The law does not say “unless you used AI.”

So when you think about vibe coding limitations, remember, they are not only technical. They are legal and operational too.
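One of the blind spots above, card numbers in plaintext logs, can be partly guarded against in code. The following is a rough sketch using Python's standard `logging` module, not a complete PII control. The pattern and the choice to keep the last four digits are assumptions made for illustration; your own masking rules should come from your compliance requirements.

```python
import logging
import re

# Assumption: any run of 13 to 19 digits, optionally separated by spaces
# or hyphens, is treated as a possible card number.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def mask_card_numbers(text):
    """Replace all but the last four digits of card-like numbers with '*'."""
    def _mask(match):
        digits = re.sub(r"\D", "", match.group())
        return "*" * (len(digits) - 4) + digits[-4:]
    return CARD_PATTERN.sub(_mask, text)


class RedactCardNumbers(logging.Filter):
    """Logging filter that masks card-like numbers before a record is emitted."""

    def filter(self, record):
        # Render the message with its arguments first, then mask the result.
        record.msg = mask_card_numbers(record.getMessage())
        record.args = None
        return True
```

Attaching the filter to a handler, for example `handler.addFilter(RedactCardNumbers())`, applies the masking to every record that handler emits. A filter like this is a safety net, not a substitute for keeping sensitive fields out of log calls in the first place.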

When Agent Written Code Leaks 1.5 Million API Keys

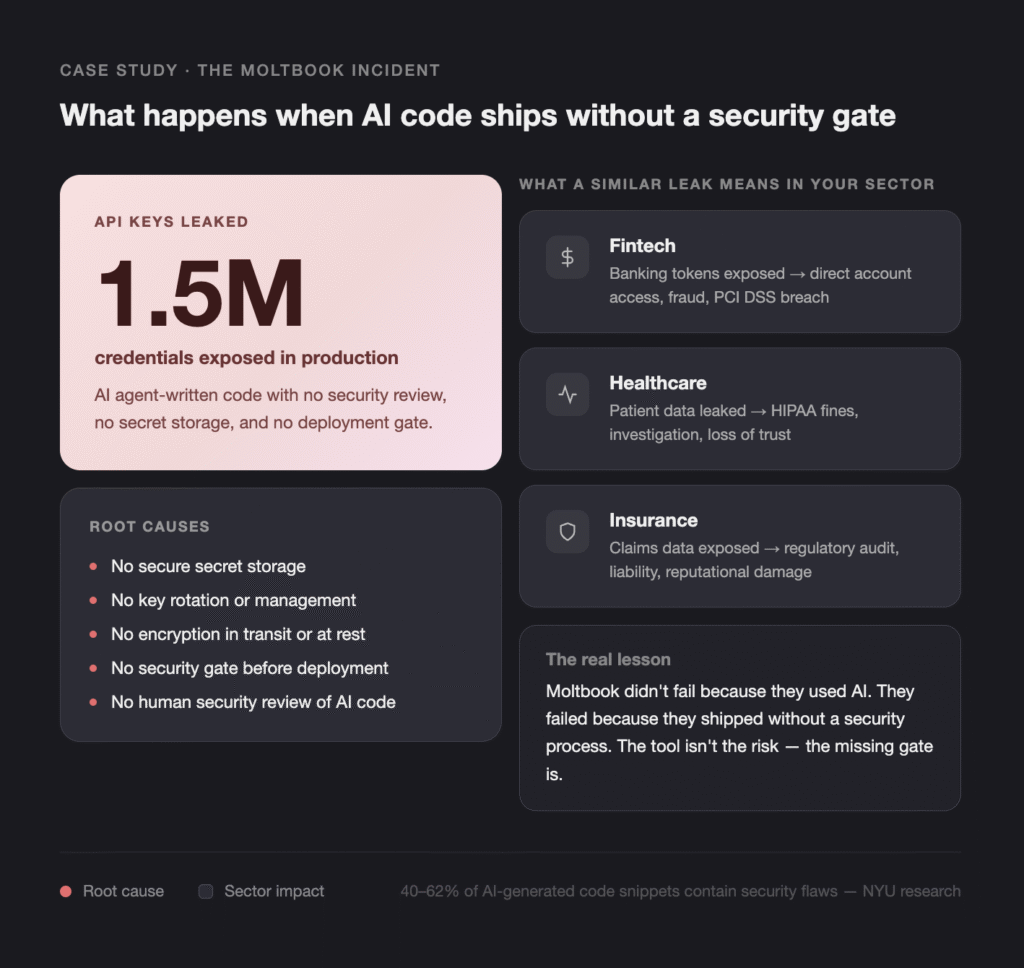

The Moltbook incident is a practical example of what happens when vibe coding meets weak security. It is not a horror story about AI, it is a warning about process.

Moltbook incident overview

Here is the short version. Moltbook used AI agents to write and manage parts of their system. These agent written pieces of code included logic around handling secrets and API keys.

Because the code was not properly reviewed, and the security design itself was flawed, the system ended up leaking about 1.5 million API keys. The root causes included:

- No secure secret storage.

- Inadequate key management and rotation.

- Lack of encryption in transit or at rest for some keys.

- No security gate before deployment.

This is a classic example of AI generated code security issues. The agent was not malicious. It simply followed patterns that were not safe for a real world environment.
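For contrast with the root causes listed above, here is a minimal sketch of one safer habit: reading secrets from the runtime environment instead of the source code, and failing fast when one is missing. It does not describe Moltbook's code. The function names and the `PAYMENTS_API_KEY` variable are hypothetical, and in production the environment would typically be populated by a secret manager rather than set by hand.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is absent from the environment."""


def load_secret(name, env=None):
    # The secret comes from the process environment, never from a literal
    # in the repository. `env` can be injected for testing.
    env = os.environ if env is None else env
    value = env.get(name, "").strip()
    if not value:
        raise MissingSecretError(f"secret {name!r} is not set")
    return value


def fingerprint(secret, visible=4):
    # A log-safe representation: everything but the last few characters
    # is masked, so the full value never reaches a log line.
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

A call such as `load_secret("PAYMENTS_API_KEY")` raises at startup if the key is missing, which surfaces configuration problems before any request is served. Logging `fingerprint(key)` instead of the key itself keeps debugging possible without exposing the value.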

Why this matters beyond one company

The critical point: Moltbook did not get into trouble “because they used AI.” They got into trouble because they shipped a system with severe security gaps.

The pattern is simple:

- No proper security architecture.

- No human security review.

- No security gating in CI or release process.

Any organization that treats vibe coding as “let the AI handle the hard parts” and adopts it without guardrails is at risk of repeating this pattern. If your system handles credentials, banking tokens, healthcare data, or insurance claims, then an API key leakage incident could mean direct access to production systems, customer accounts, or sensitive records.

Lessons for regulated industries

In fintech, healthcare, and insurance, the consequences of AI code security risks can be severe:

- Massive credential leaks lead to account takeover and fraud.

- PII exposures lead to regulatory fines and investigations.

- System failures can harm real customers in real life situations.

The lesson is not “never use AI.” The lesson is “never deploy AI generated code without a proper security and compliance review.”

At Teamvoy, we often help clients add structured reviews around their existing AI experiments. One financial client came to us with an AI built internal tool. We found:

- Hardcoded secrets checked into the repo.

- Missing role based access control.

- Logs containing partial card details.

Vibe coding itself was not the problem. The problem was AI code with no security owner. Once we brought in security checks, static analysis, and manual review, they could keep using AI safely. For more on how we approach this in regulated environments, see our overview of fintech software development.

How do you solve AI code security risks? A practical playbook

The short answer: treat AI as a power tool with explicit limits, gate every deployment with a security review designed for AI volume, and assign human owners for every layer the model touches. The longer answer is below, organized as a six-step process you can implement this quarter.

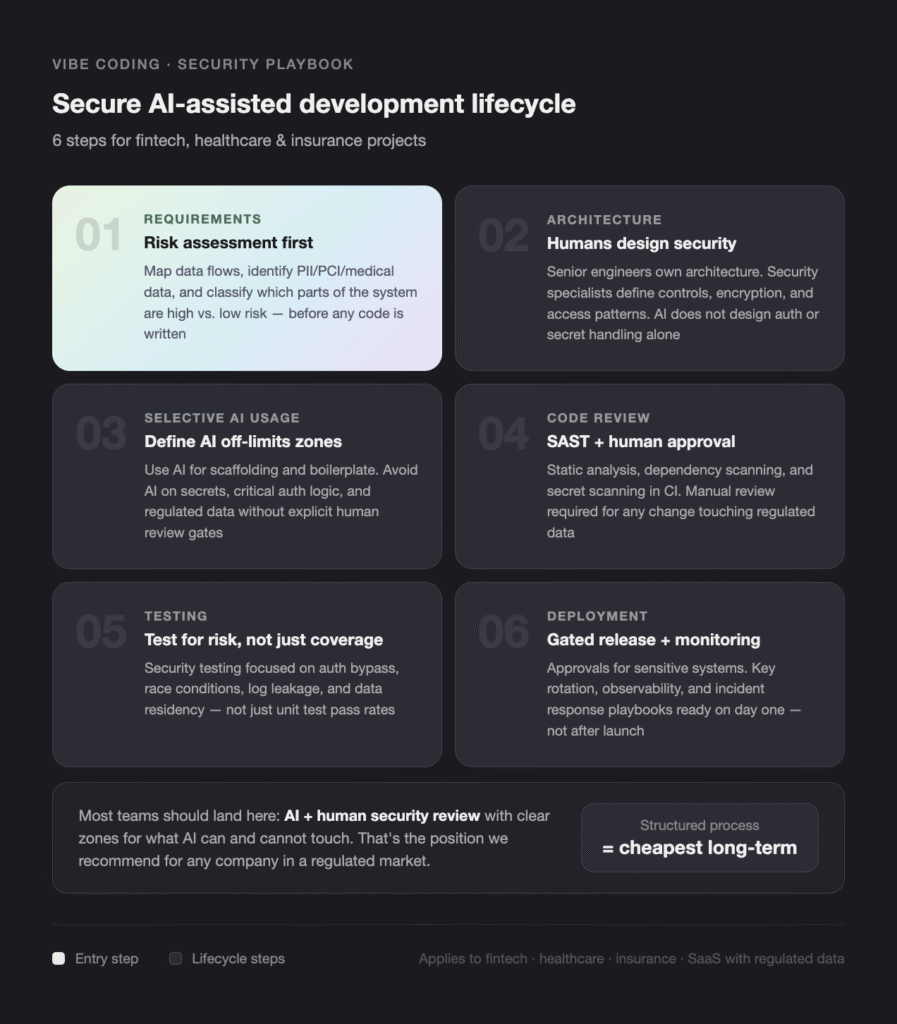

Step by step: secure AI assisted development lifecycle

This is the lifecycle we run on fintech, healthcare, and insurance projects.

- Requirements and risk assessment. Understand domain, data flows, and regulatory context. Identify PII, PCI, or medical data early. Decide which parts of the system are “high risk” and which are “low risk” before any code is written.

- Architecture and security design. Senior engineers define architecture. Security specialists design controls, encryption, and access patterns. AI is not allowed to design auth flows, secret handling, or data persistence by itself.

- Selective AI usage. Use AI for scaffolding, boilerplate, and repetitive code. Avoid AI for direct handling of secrets and critical auth logic without human review. Define explicit “AI off-limits” zones in your repo.

- Code review and analysis. Manual code review focused on security. Static analysis (SAST), dependency scanning, and secret scanning integrated in CI. Human approval required for any change touching regulated data.

- Testing and validation. Unit and integration tests, security testing, and where needed, penetration testing. Test plans focus on risk, not just coverage: auth bypass, race conditions, log leakage, data residency.

- Deployment with security gates. Approvals for changes touching sensitive systems. Observability and logging configured before go live. Key rotation, monitoring, and incident response playbooks ready on day one.
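The secret scanning and deployment gate steps above can be sketched as a small check that runs in CI. This is an illustrative toy, not a replacement for a dedicated secret scanner. The rule names, the patterns, and the `changed_files` input (a mapping of file path to file content) are assumptions made for this example.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
    "hardcoded_assignment": re.compile(
        r"(?:api[_-]?key|secret|token|password)\w*\s*[:=]\s*['\"][^'\"]{8,}['\"]",
        re.IGNORECASE,
    ),
}


def scan_text(path, text):
    """Return (path, line_number, rule) for every line that matches a rule."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((path, line_no, rule))
    return findings


def deployment_gate(changed_files):
    """Return (ok, findings); ok is False if any changed file has a finding."""
    findings = []
    for path, text in sorted(changed_files.items()):
        findings.extend(scan_text(path, text))
    return (not findings, findings)
```

In a pipeline, the job would exit with a non-zero status whenever `ok` is false, so the merge or deploy cannot proceed until a human has looked at the findings. The point of the gate is that it scales with AI output volume in a way manual review alone does not.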

In-house vs. AI agent vs. AI plus human review: trade-offs

| Approach | Speed | Security risk | Compliance fit | Best for |
|---|---|---|---|---|
| Fully in-house, no AI | Slowest | Lowest (if team is mature) | High | Highly regulated, very high stakes systems |
| Pure vibe coding, no review | Fastest | Highest | Very low | Throwaway prototypes, internal demos |
| AI plus human security review | Fast | Low when gated | High | Production systems in fintech, healthcare, insurance, SaaS |
| AI plus offshore reviewer with no security context | Fast | Medium to high | Low | Avoid for regulated data |

Most teams should land on row three: AI plus human security review, with clear zones for what AI can and cannot touch. That is the position we recommend for any company we work with in a regulated market. For a deeper view of how to structure the engineering team around this, see staff augmentation vs. outsourcing.

When should you bring in a partner like Teamvoy?

You should bring in a delivery partner when AI has scaled your code output past the size of your existing security review capacity, when you are entering a regulated market for the first time, or when an incident has already shown you the gap between speed and oversight. The signal is not “we want to use AI.” It is “we are using AI and our review process has not caught up.”

Talk to a partner if any of the following is true.

- You are launching a fintech, healthtech, or insurance product and you want secure AI assisted development from day one.

- You have an internal AI built prototype and need it hardened to production grade before customers see it.

- You are responding to new regulations (DORA, EU AI Act, NIS2, NYDFS Part 500) and need to prove security by design.

- Your engineering team is shipping AI generated code faster than your security reviewers can audit.

- You have already had a near miss, leaked secret, or auditor finding tied to AI generated code.

What you get

When you engage Teamvoy on this, you get senior engineers who own architecture, security specialists who own threat modeling, and a delivery lead who owns timeline and accountability. We work as a dedicated development team or as embedded experts inside your existing org, depending on what you need. For more on how we structure those engagements, see our breakdown of staff augmentation vs. outsourcing.

The trade-off to name: a structured AI plus human review process is not the cheapest option on day one. It is the cheapest option across the life of the system, because you do not pay for the leak, the audit finding, or the rebuild.

How To Use AI For Coding Without Getting Burned

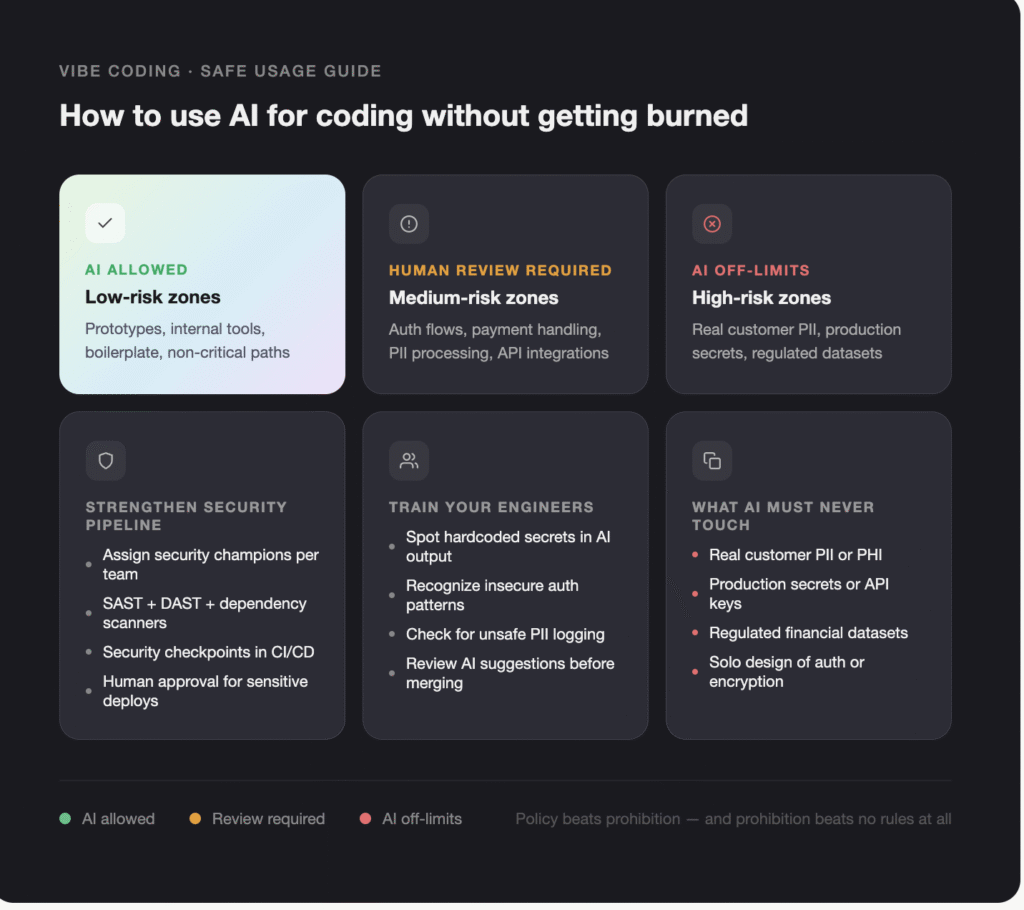

You do not need to ban vibe coding. You need to put structure around it. Policies, training, and guardrails turn AI code from a liability into an asset.

Establish policy, not prohibition

Instead of “no AI” or “AI everywhere,” define clear rules:

- Where AI can be used, for example prototypes, internal tools, non critical paths.

- Where AI must be paired with mandatory human review, for example auth flows, payment handling, PII processing.

- Which data must never be sent to AI tools, for example real customer PII, production secrets, regulated datasets.

This is how you keep AI software development risks under control without losing the benefits.
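The first two rules above can be expressed as a small policy table that a CI job or review bot checks on every change. The sketch below is one possible shape, not a standard. The zone names, the repository paths, and the `ai_assisted` flag (which assumes your team labels AI-assisted changes in some way) are all hypothetical. The third rule, about data that must never be sent to AI tools, needs separate controls and is not covered here.

```python
from fnmatch import fnmatchcase

# Hypothetical repository layout; the first matching pattern wins.
POLICY_ZONES = [
    ("src/auth/*", "ai_off_limits"),
    ("src/payments/*", "human_review_required"),
    ("src/pii/*", "human_review_required"),
    ("prototypes/*", "ai_allowed"),
]

# Assumption: anything not explicitly listed defaults to mandatory review.
DEFAULT_ZONE = "human_review_required"


def zone_for(path):
    """Return the policy zone for a repository path."""
    for pattern, zone in POLICY_ZONES:
        if fnmatchcase(path, pattern):
            return zone
    return DEFAULT_ZONE


def evaluate_change(changed_paths, ai_assisted):
    """Split changed paths into blocked ones and ones needing human review."""
    blocked = []
    needs_review = []
    for path in changed_paths:
        zone = zone_for(path)
        if zone == "ai_off_limits" and ai_assisted:
            blocked.append(path)
        elif zone != "ai_allowed":
            needs_review.append(path)
    return {"blocked": blocked, "needs_human_review": needs_review}
```

Defaulting unlisted paths to mandatory review is a deliberate choice in this sketch: a new directory is treated as sensitive until someone decides otherwise, rather than the reverse.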

Strengthen your security review process

To handle AI code security risks at scale, teams need stronger security pipelines:

- Assign security champions in each team.

- Use automated tools like SAST, DAST, and dependency scanners.

- Add security checkpoints in CI/CD pipelines.

- Require human approval for deployments involving sensitive data or critical components.

Vibe coding limitations become manageable when your review process is designed for higher velocity. For a starting point, see our code review checklist.

Invest in education and awareness

Your engineers are your first line of defense. Train them to recognize AI code security risks and common pitfalls:

- Hardcoded secrets and unsafe configs.

- Insecure input handling and missing auth checks.

- Unsafe logging of PII or secrets.

Once developers understand what vibe coding really implies, they adopt safer habits, such as always checking AI suggestions for security issues before merging.

Next steps

- Run a one-week audit on your highest-traffic agent’s token usage and retry rate.

- Add a per-task budget cap and an early-stop rule before scaling further.

- Book a 30-minute call with a Teamvoy delivery lead to benchmark your stack.

For raw signal from practitioners, r/MachineLearning and the Stanford AI Index Report are worth bookmarking.

Conclusion

Vibe coding is not the enemy. Ungoverned, insecure software is. Whether code comes from a senior engineer or an AI model, your organization is still responsible for protecting data, meeting compliance obligations, and maintaining cyber resilience. The method of code creation is secondary to the security, compliance, and reliability of the final system. For serious businesses, vibe coding must always mean secure AI-assisted development, not “prompt and ship.”

To recap:

- AI accelerates code creation, not engineering judgment, security, or compliance.

- The Moltbook leak is a process failure, not an AI failure, and the same pattern can hit any team without security gates.

- The fix is policy, ownership, and a security lifecycle designed for AI volume, not a ban on AI.

“Before you let AI write production code, let’s talk about your security review process. We’ll show you how we build agent-assisted systems that don’t compromise on safety.”