Key takeaways:

Choosing an AI vendor for fintech in 2026 is a compliance and architecture decision before it’s a pricing decision. Score every candidate on three criteria in order — compliance readiness (explainability and audit trails), vendor lock-in risk (open formats, no egress fees), and true total cost of ownership beyond API tokens — and treat regulatory alignment, model risk management, and bank-grade security as strict filters, not bonus points. For regulated decisions like trade surveillance, AML, KYC, and credit scoring, a hybrid approach (off-the-shelf base model plus a custom compliance layer) usually beats both pure build and pure buy. Getting the choice wrong typically costs $150,000–$500,000 in switching costs and compliance rework within 18 months (Teamvoy internal delivery data, 12 fintech engagements, 2023–2025).

- Define 1 or 2 high-value use cases with clear owners, data constraints, and KPIs before talking to any AI vendor for fintech.

- Decide what type of vendor you need — document AI, fraud analytics, generative AI, or a custom engineering partner — and whether you prefer one platform or a composable stack.

- Use a weighted scorecard where business fit, security, and compliance together carry at least 30% of the decision weight.

- Treat regulatory alignment and bank-grade security as strict filters, not “nice to have” bonuses — verify each with written evidence and live references.

- Use fintech-specific RFPs, structured pilots on real data, and lock-in-aware contracts to manage risk and keep future options open.

Introduction

Choosing an AI vendor for fintech in 2026 is a compliance and architecture decision before it’s a pricing decision. This article covers a three-criterion scoring framework (compliance, lock-in, TCO), a build vs. buy vs. hybrid matrix, a 12-question due-diligence checklist, US and Nordic procurement differences, a 30/60/90-day PoC playbook, and a vendor-type comparison. Regulated use cases — trade surveillance, AML, KYC, credit scoring — usually call for a hybrid path: a base model plus a custom compliance layer.

Why does AI vendor choice matter more in 2026?

AI vendor choice matters more in 2026 because AI now sits inside the decisions that regulators, auditors, and customers will judge you on — KYC approval, AML alerts, credit decisions, fraud scores — and the question has shifted from “should we use AI?” to “can we defend how this decision was made, three years from now?”

Where AI is now embedded in fintech workflows:

- KYC and customer onboarding.

- AML transaction monitoring and alert triage.

- Fraud detection and anomaly scoring.

- Credit scoring and AI-powered credit decisioning in fintech lending.

- Collections strategy and next-best-contact.

- Invoice processing and reconciliation.

- Customer support chatbots and internal employee assistants.

- Trade surveillance and market abuse detection.

Generative AI and LLMs now sit inside many of these workflows. That brings new risks (hallucinations, bias, explainability gaps, and data leakage) into systems that previously ran on deterministic rules. Regulators have responded. The EU AI Act classifies credit scoring and creditworthiness assessment of individuals as high-risk, which means documented data governance, technical documentation, and human oversight; AML and KYC systems are not all listed by name, but supervisors typically expect comparable explainability from them. DORA sharpens third-party ICT risk and vendor resilience expectations. NYDFS Part 500, FINRA, and SEC guidance in the US push the same direction.

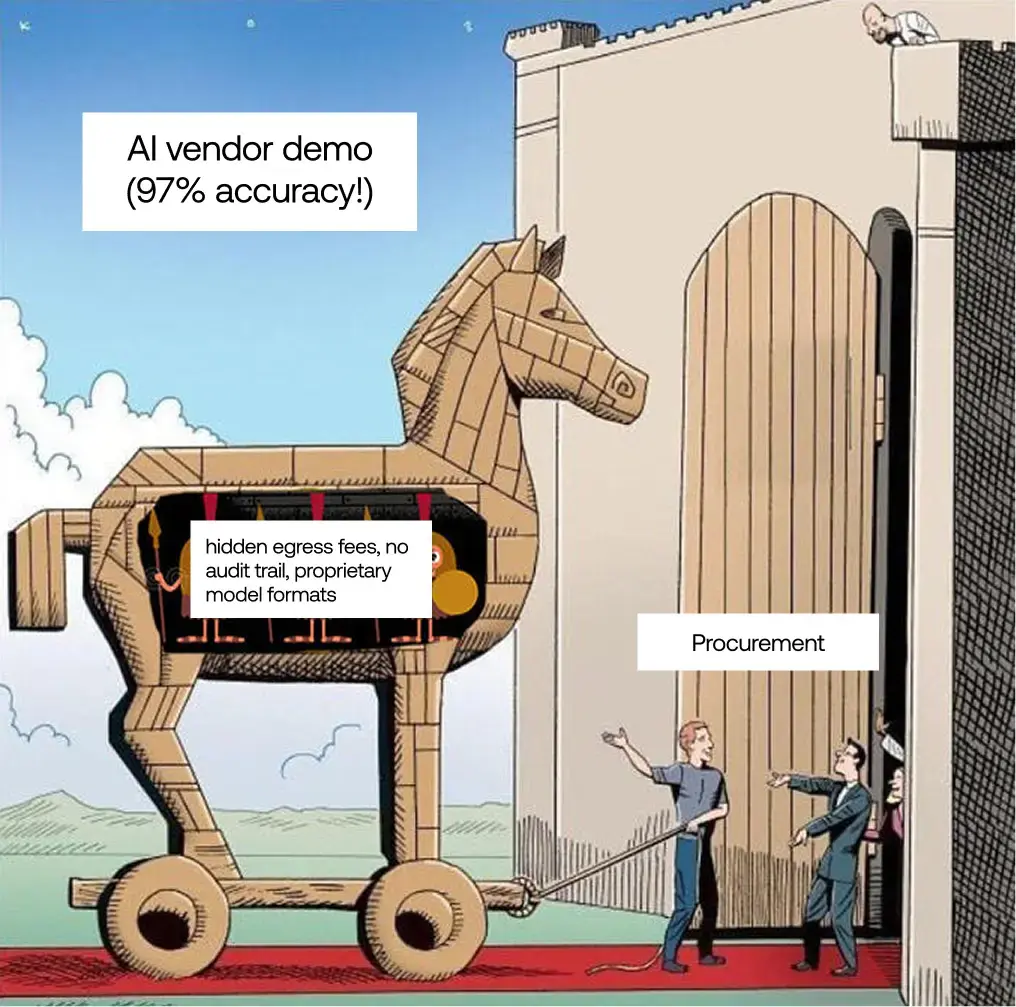

Two patterns we see consistently in fintech vendor evaluations:

- A vendor is picked on demo accuracy, then killed by compliance six months later because the model can’t be explained — and the team starts over.

- Lock-in only becomes a conversation when the team tries to leave: data is in proprietary formats, prompts can’t be exported, and egress fees turn a 6-week migration into a 6-month one.

The symptoms of a bad fit show up late:

- Compliance kills the project after months of integration work because outputs can’t be explained.

- Switching providers costs 3–4x more than expected because data and prompts are in proprietary formats.

- Year-two run-rate is 2–3x the original quote once prompt maintenance, fine-tuning, and integration fixes are added in.

- Regulators ask for an audit trail that the vendor’s API was never designed to produce.

The cost of ignoring this is concrete. FINRA’s 2026 Annual Regulatory Oversight Report states plainly that “firms are responsible for their regulatory obligations regardless of whether humans or machines execute them.” If your vendor can’t show its work, you are the one explaining it to the regulator.

The financial exposure is also documented. IBM’s Cost of a Data Breach report places the average breach cost in financial services above $5M per incident — well ahead of most other sectors. When the vendor in question touches KYC, AML, fraud, or core banking flows, that number sits on your P&L, not theirs.

Choosing an AI vendor for fintech in 2026 is a strategic risk decision, not a software buy. The right vendor has to balance innovation with bank-grade security, model risk management, third-party risk management (TPRM), and regulatory alignment, and prove it with evidence, not slides. A few years ago, the question we heard most from fintech clients was, “Can you automate this process?” Now it’s “Can we show our regulator how this decision was made, three years from now?” The framework below is built around that second question.

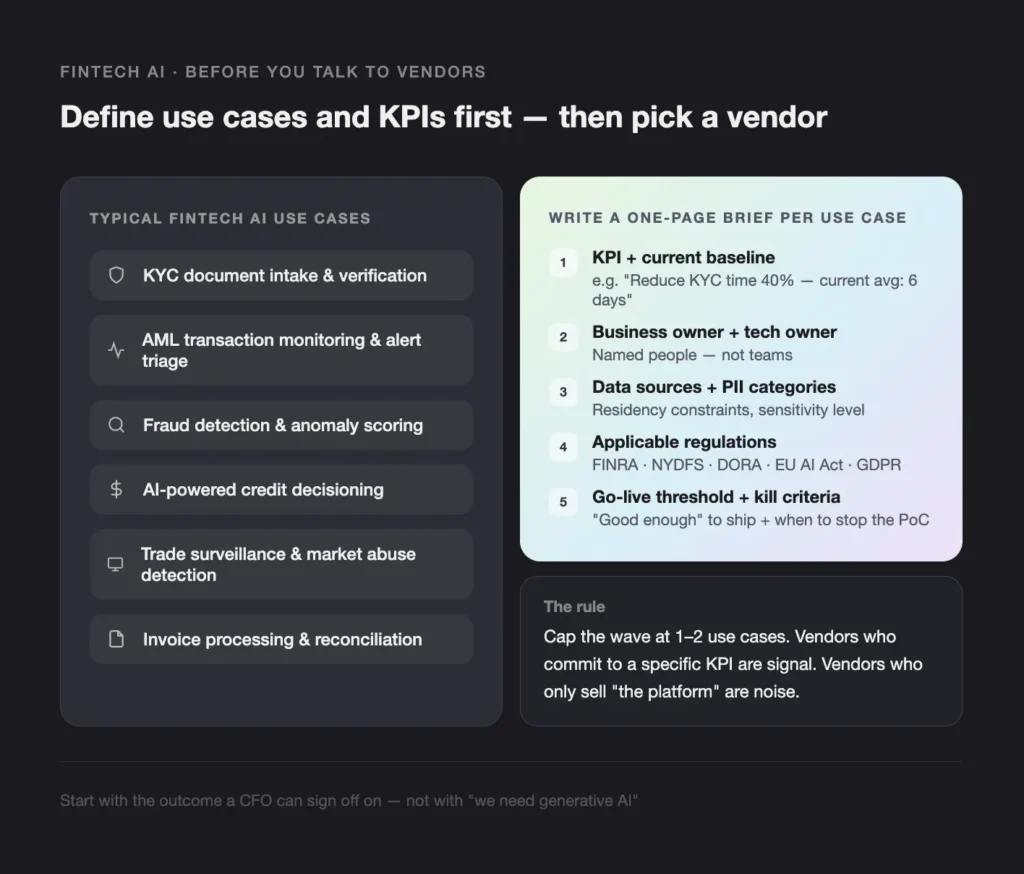

Define your fintech AI use cases and KPIs before you talk to any vendor

Start with one or two concrete use cases tied to measurable KPIs, not with a vendor category. The fastest way to get lost in the 2026 AI landscape is to lead with tools — “we need generative AI” or “we need a top AI vendor for fintech” — instead of an outcome a CFO can sign off on.

Frame each use case as a business problem, not a feature:

- “Reduce KYC onboarding time by 40% without increasing rejection rate.”

- “Cut AML alert false positives by 30% with no increase in missed SARs.”

- “Lift pre-authorization fraud catch rate by 20 percentage points at the same false-positive rate.”

- “Bring invoice processing cost per document below $0.10 with 99%+ field accuracy.”

Typical fintech AI use cases worth scoping first:

- KYC document intake and verification.

- AML transaction monitoring and alert triage.

- Fraud detection and anomaly scoring (card, ACH, cross-border payments).

- AI-powered credit decisioning in lending and BNPL.

- Collections optimization and next-best-contact.

- Invoice processing and reconciliation.

- Customer support chatbots and internal employee assistants.

- Trade surveillance and market abuse detection.

For each shortlisted use case, write a one-page brief before any vendor call. Include:

- The KPI you’re trying to move and the current baseline.

- The named business owner and the named tech owner.

- The data sources, residency constraints, and PII categories involved.

- The regulators and frameworks that apply (FINRA, NYDFS Part 500, DORA, EU AI Act, GDPR — pick the real ones).

- The “good enough” threshold for go-live and the kill criteria for the PoC.

Trade-off to name: limiting the first wave to 1–2 use cases means saying no to four other stakeholder requests. Do it anyway. Vendors who can quote a clear win on a tightly scoped use case are signal; vendors who only sell a “platform” without committing to a specific KPI are noise.

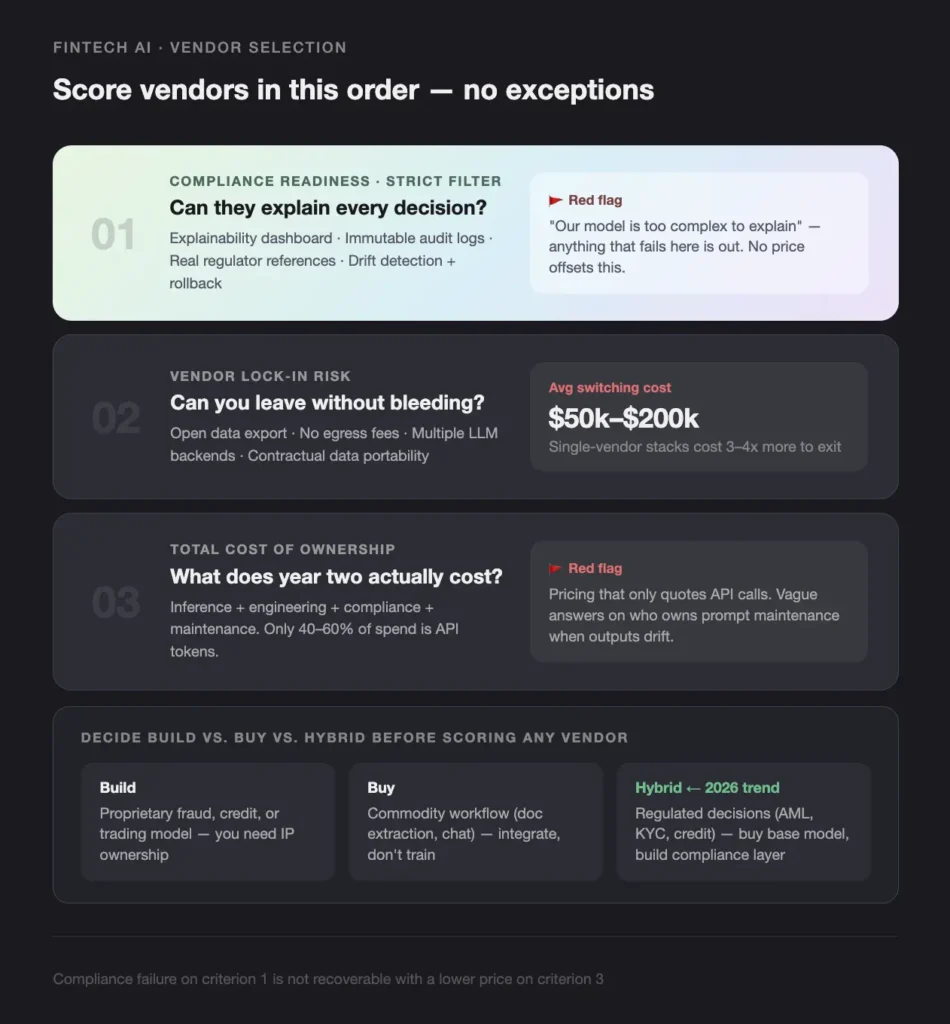

How do you choose an AI vendor for fintech?

Score every vendor on three criteria, in this order: compliance readiness, lock-in risk, then total cost of ownership. Anything that fails criterion one is out — there is no offsetting a black-box model with a low price. Treat the process as AI procurement and third-party risk management (TPRM), not a tooling decision — that framing forces the right artifacts (model risk management documents, vendor questionnaires, exit clauses) into the workflow before legal gets involved.

Criterion 1: Compliance readiness

Ask every vendor:

- Can your model explain every decision in plain language, not just a confidence score?

- Do you maintain immutable audit logs of inputs, outputs, and intermediate steps?

- Have you been used by customers audited by SEC, FINRA, BaFin, FCA, or MAS, and can we talk to them?

- How do you handle model drift detection and rollback?

Red flag: “Our model is too complex to explain” or “Trust us, the accuracy is high.”

Green flag: A working explainability dashboard, a sample audit report from a real deployment, and a clear retention policy on logs.
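What “immutable” means varies by vendor, so it helps to know what a good answer looks like. One common way to make a decision log tamper-evident is a hash chain: each record stores the SHA-256 hash of the previous record, so editing any past entry breaks verification from that point on. The sketch below illustrates that technique under stated assumptions; it is not any vendor’s API, and `AuditLog`, `append`, `verify`, and `replay` are hypothetical names. A production log would also need durable write-once storage and a retention policy.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only, hash-chained decision log (illustrative only)."""
    entries: List[Dict[str, Any]] = field(default_factory=list)

    def append(self, decision_id: str, inputs: Dict[str, Any], steps: List[str],
               output: Any, confidence: float) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {
            "decision_id": decision_id,
            "inputs": inputs,
            "steps": steps,          # intermediate steps, e.g. rules fired or features used
            "output": output,
            "confidence": confidence,
            "prev_hash": prev_hash,  # links this record to the one before it
        }
        record["hash"] = _digest(record)  # hash computed before the "hash" key exists
        self.entries.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered record makes this False."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

    def replay(self, decision_id: str) -> Optional[Dict[str, Any]]:
        """Return the full record (inputs, steps, output, confidence) for one decision."""
        for entry in self.entries:
            if entry["decision_id"] == decision_id:
                return entry
        return None
```

`replay` is roughly what a compliance reviewer needs months later: given a decision ID, show the inputs, intermediate steps, output, and confidence exactly as they were logged.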

Criterion 2: Vendor lock-in risk

Ask every vendor:

- Can we export training data, fine-tuned weights, prompts, and evaluation sets in open formats?

- Do you charge egress fees, and what are they?

- Which LLM backends do you support, and can we bring our own?

- What happens to our data and models if we terminate the contract?

The average cost to switch LLM providers mid-project sits in the $50,000–$200,000 range — data migration, prompt rewriting, fine-tuning re-runs, and compliance re-validation (Teamvoy delivery data, 2024–2025; consistent with public switching-cost benchmarks from a16z’s State of AI in Production). Single-vendor stacks report 3–4x higher switching costs than multi-backend architectures.

Red flag: proprietary model formats, egress fees, no written data export path.

Green flag: support for multiple backends (OpenAI, Anthropic, open-source), standardized APIs, contractual data portability.
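Multi-backend support is easier to verify when your own code depends on a thin interface rather than on one vendor’s SDK. A minimal sketch, assuming a single text-completion call is all the use case needs; `CompletionBackend`, `EchoBackend`, and `ModelRouter` are hypothetical names, and a real adapter would wrap each vendor’s client inside `complete`.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


class CompletionBackend(Protocol):
    """The only surface application code may call (hypothetical interface)."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoBackend:
    """Stand-in for tests; a real adapter would call one vendor's SDK inside complete()."""
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelRouter:
    """Sends every call to the configured backend, so switching is a config change."""

    def __init__(self, backends: Dict[str, CompletionBackend], active: str) -> None:
        self._backends = dict(backends)
        self.active = ""
        self.switch(active)

    def switch(self, name: str) -> None:
        if name not in self._backends:
            raise KeyError(f"unknown backend: {name}")
        self.active = name

    def complete(self, prompt: str) -> str:
        return self._backends[self.active].complete(prompt)
```

The design choice is that changing providers becomes a configuration change plus re-validation rather than a rewrite of every call site. It does not remove the need to re-run evaluation and compliance checks against the new backend.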

Criterion 3: Total cost of ownership

Ask every vendor:

- What percentage of your price is model inference vs. engineering support?

- Who owns prompt maintenance when outputs drift?

- What are overage fees for volume spikes?

- What’s included in year two — and what becomes a change order?

Only 40–60% of fintech AI spend is on API tokens. The rest is prompt engineering, fine-tuning, compliance validation, and integration maintenance. Per-token comparisons routinely miss 2–3x of the real cost. (Teamvoy AI TCO benchmark, 2025; aligned with patterns reported in Andreessen Horowitz and Sequoia LLM economics analyses.)
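The arithmetic behind that gap is simple: if tokens are a share s of total spend, total cost is token spend divided by s. A minimal sketch, assuming the 40–60% range quoted above; `true_tco` and `tco_range` are illustrative helpers, not a Teamvoy tool. At those shares the total works out to roughly 1.7–2.5 times the token line alone.

```python
from typing import Tuple


def true_tco(token_spend: float, token_share: float) -> float:
    """Scale token spend up to total spend, given tokens' share of the total."""
    if not 0 < token_share <= 1:
        raise ValueError("token_share must be in (0, 1]")
    return token_spend / token_share


def tco_range(token_spend: float, low_share: float = 0.40,
              high_share: float = 0.60) -> Tuple[float, float]:
    """Return (low, high) total cost; a smaller token share means a larger total."""
    return true_tco(token_spend, high_share), true_tco(token_spend, low_share)


# Example: a vendor quote that covers $120,000/year of API tokens only.
low, high = tco_range(120_000)
print(f"Estimated total: ${low:,.0f} to ${high:,.0f}")
```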

Red flag: pricing that only quotes API calls. Vague answers on maintenance ownership.

Green flag: line-itemed inference, engineering, and compliance costs. Fixed-cost options for predictable volumes.
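The “compliance first, then lock-in, then TCO” order can be encoded so that a cheap vendor can never buy its way past criterion one. A minimal sketch: the 0–5 scale and the 0.40/0.35/0.25 weights are assumptions for illustration, not a recommended standard, and `VendorScore` and `rank_vendors` are hypothetical names. Set your own weights before scoring anyone.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Illustrative weights (assumptions, not a standard); they must sum to 1.0.
DEFAULT_WEIGHTS = {
    "compliance": 0.40,  # depth of explainability and audit evidence beyond the gate
    "lock_in": 0.35,     # higher score = lower lock-in risk
    "tco": 0.25,         # higher score = more transparent, predictable cost
}


@dataclass
class VendorScore:
    name: str
    passes_compliance_gate: bool  # explainability and audit trail verified in writing
    scores: Dict[str, float]      # each criterion scored 0-5


def rank_vendors(vendors: List[VendorScore],
                 weights: Dict[str, float] = DEFAULT_WEIGHTS) -> List[Tuple[str, float]]:
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    # Criterion 1 is a filter: a failing vendor is dropped, never offset by price.
    eligible = [v for v in vendors if v.passes_compliance_gate]
    ranked = [(v.name, sum(w * v.scores[c] for c, w in weights.items())) for v in eligible]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)
```

A vendor that scores 5/5 everywhere but fails the compliance gate never appears in the ranking at all, which is the point of treating criterion one as a filter rather than a weight.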

Build vs. buy vs. hybrid: a decision matrix

Use this matrix to decide before scoring vendors. Buying the wrong layer is more expensive than buying the wrong vendor.

| If your AI use case is… | Then… | Why |
|---|---|---|
| Core differentiator (proprietary fraud, credit, trading model) | Build with an engineering partner | You need ownership of IP and model weights |
| Commodity workflow (document extraction, summarization, chat) | Buy an API-first vendor | Spend engineering on integration, not training |
| Regulated decision (trade surveillance, AML, KYC, credit) | Hybrid — buy the base model, build the compliance layer | Off-the-shelf vendors rarely ship the explainability and audit controls regulators expect |

The 2026 trend: regulated fintechs are moving from “buy” to “hybrid.” Compliance requirements for AI decisions have outgrown what most off-the-shelf vendors ship.
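The matrix rows can overlap: a proprietary credit model is both a core differentiator and a regulated decision. A minimal sketch that makes the precedence explicit; ranking “core differentiator” first is an assumption (on the reasoning that a full build with an engineering partner would include its own compliance layer), and `sourcing_path` is a hypothetical helper.

```python
def sourcing_path(is_core_differentiator: bool, is_regulated_decision: bool) -> str:
    """Map a use case to build / buy / hybrid using the matrix rows.

    Assumed precedence: a core differentiator is built even if it is also regulated.
    """
    if is_core_differentiator:
        return "build with an engineering partner"
    if is_regulated_decision:
        return "hybrid: buy the base model, build the compliance layer"
    return "buy an API-first vendor"


# Examples drawn from the matrix rows.
print(sourcing_path(True, True))    # proprietary credit model
print(sourcing_path(False, True))   # AML alert triage
print(sourcing_path(False, False))  # document summarization
```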

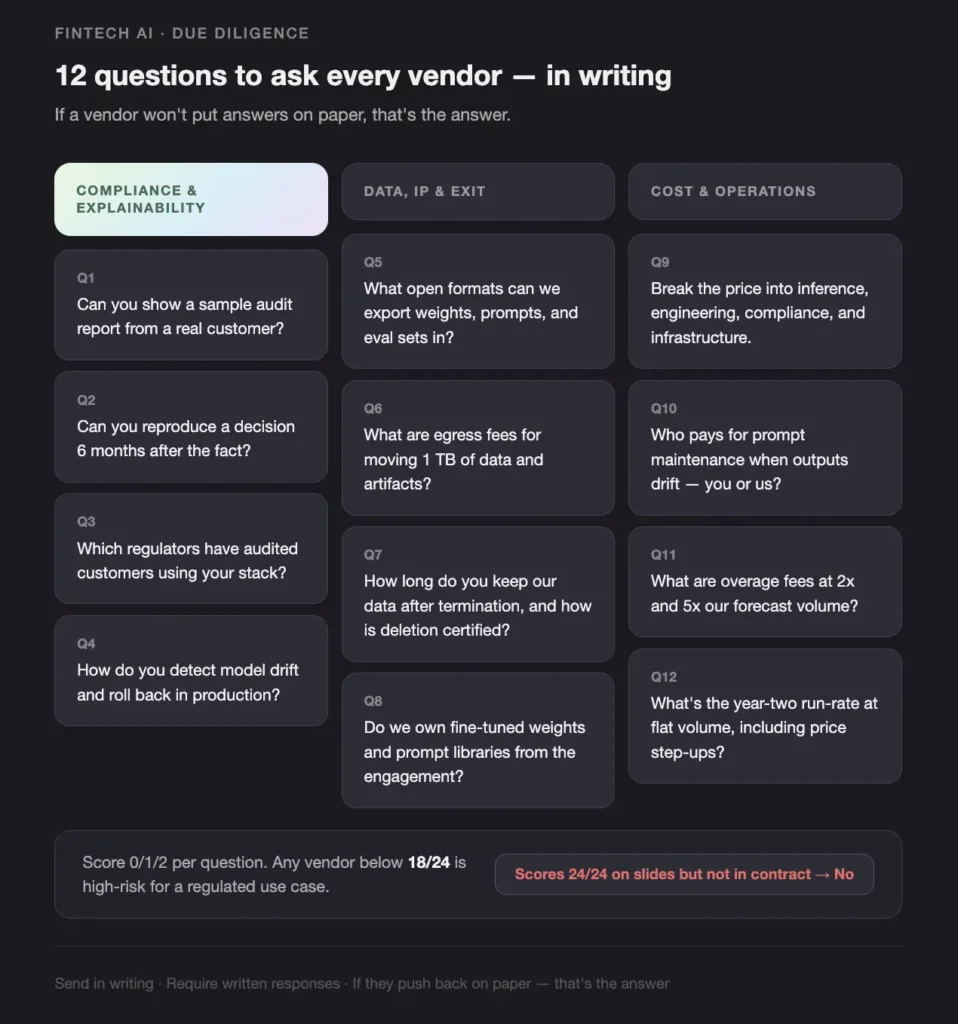

What questions should you ask every AI vendor before signing?

Send this 12-question due-diligence list to every shortlisted vendor in writing, and require written answers — not a sales-deck walk-through. If a vendor pushes back on putting answers on paper, that’s the answer.

Compliance and explainability

- Show us a sample audit report you’ve produced for a real customer (PII redacted).

- For a given decision, can you reproduce inputs, intermediate steps, output, and confidence six months after the fact?

- Which financial regulators have your customers been audited by while using your stack? Can we speak to two of them?

- How do you detect model drift, and what’s the rollback procedure when accuracy degrades in production?

Data, IP, and exit

- List every data export format you support, including fine-tuned weights, prompts, and evaluation sets.

- What are the contractual egress fees for moving 1 TB of training data and model artifacts?

- If we terminate, how long do you keep our data, and how is deletion certified?

- Do we own derivative IP (fine-tuned weights, prompt libraries) created during the engagement?

Cost and operations

- Break the quoted price into model inference, engineering hours, compliance support, and infrastructure.

- Who pays for prompt maintenance and re-tuning when outputs drift — you or us?

- What are overage fees at 2x and 5x our forecast volume?

- What’s the year-two run-rate at flat volume, including any expected price step-ups?

A useful pattern: score answers on a simple 0/1/2 scale across all 12 questions. Any vendor below 18/24 is a high-risk choice for a regulated use case. Any vendor that scores 24/24 on the slide deck but won’t repeat it in the contract is a no.
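The scoring rule fits in a few lines. A minimal sketch, assuming you record two scores per question: what the vendor claimed in writing and what it agreed to put in the contract. Rejecting a vendor whenever any contract score falls below its written score is one strict reading of the “won’t repeat it in the contract” rule; `vendor_verdict` and the verdict strings are hypothetical.

```python
from typing import Sequence

QUESTION_COUNT = 12
HIGH_RISK_BELOW = 18  # threshold from the text: below 18/24 is high risk


def _check(scores: Sequence[int]) -> None:
    if len(scores) != QUESTION_COUNT:
        raise ValueError(f"expected {QUESTION_COUNT} scores, got {len(scores)}")
    if any(s not in (0, 1, 2) for s in scores):
        raise ValueError("each answer is scored 0, 1, or 2")


def vendor_verdict(written: Sequence[int], contract: Sequence[int]) -> str:
    """Compare written due-diligence answers with what the contract commits to."""
    _check(written)
    _check(contract)
    # Any answer that weakens between the written response and the contract disqualifies.
    if any(c < w for w, c in zip(written, contract)):
        return "reject: claims not backed by contract"
    if sum(contract) < HIGH_RISK_BELOW:
        return "high risk for a regulated use case"
    return "proceed"
```

Scoring the contract column rather than the written one means the total reflects only what the vendor is legally committed to.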

How do US and Nordic fintech AI vendor requirements differ?

US fintechs typically optimize for speed-to-production and US regulator readiness (SEC, FINRA, NYDFS Part 500, FFIEC). Nordic fintechs optimize for documentation depth, EU regulatory alignment (DORA, MiCA, GDPR, the EU AI Act), and data residency in the EU. Both groups care about compliance, but they prove it differently.

| Dimension | US fintech expectation | Nordic fintech expectation |
|---|---|---|
| Primary regulatory frame | SOC 2 Type II, FINRA, SEC, NYDFS Part 500, FFIEC | DORA, MiCA, GDPR, EU AI Act, local FSA / Finansinspektionen requirements |
| Data residency | US-region cloud, often acceptable | EU-region (Frankfurt, Stockholm, Helsinki) required, often by contract |
| Model risk documentation | Internal model risk policy, sometimes light | Full model card, intended use, training data lineage, third-party assessment |
| Procurement cycle | 6–10 weeks for a regulated use case | 12–20 weeks — legal, DPO, and security review in parallel |
| Currency for TCO | USD | EUR / SEK / NOK / DKK — quote in local currency, not converted |
| Language for audit artifacts | English | English plus, increasingly, a Swedish or Norwegian executive summary |

Practical implications when picking a vendor:

- A vendor that’s only run inference in us-east-1 will not survive a Nordic procurement security review. Confirm EU region support before the technical PoC.

- DORA’s third-party ICT risk requirements push more burden onto your vendor’s contracts. Build the right-to-audit and exit clauses in early.

- US fintechs can often start with a six-week PoC. Nordic fintechs should expect to spend the first four weeks of the PoC on documentation and DPO sign-off before any code runs.

- Don’t reuse a US-only vendor’s compliance pack in a Nordic procurement. Map every claim back to the relevant EU regulation by name.

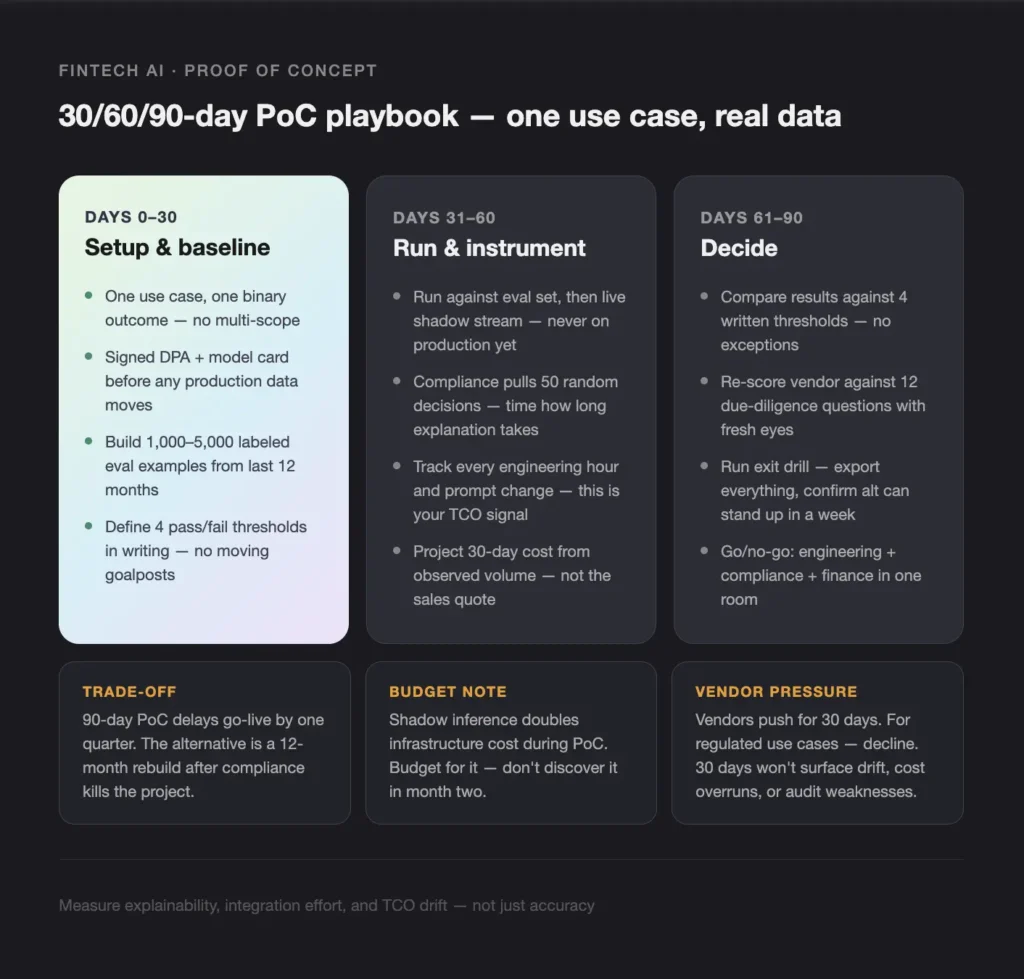

How do you run an AI vendor proof of concept in fintech?

Run a structured 30/60/90-day PoC on a single regulated use case with your real data, your real compliance requirements, and a single named owner on each side. Measure explainability, integration effort, and TCO drift — not just accuracy.

Days 0–30: Setup and baseline

- Pick one use case with a clear binary outcome (e.g., “flag this transaction for AML review: yes/no”). No multi-use scope.

- Get a signed DPA, data processing inventory, and a written model card from the vendor before any production data moves.

- Build a gold-standard evaluation set of 1,000–5,000 labeled examples from your last 12 months.

- Define the four PoC pass/fail thresholds in writing: minimum precision/recall, maximum decision latency, audit-log completeness, and integration effort budget in person-days.
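Writing the thresholds down as data makes the day-90 comparison mechanical. A minimal sketch, assuming a binary yes/no use case, latency measured per decision, and a per-decision flag for whether the audit record was complete; expressing the latency threshold as a nearest-rank 95th percentile is an assumption, and `PocThresholds` and `failed_thresholds` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class PocThresholds:
    min_precision: float
    min_recall: float
    max_p95_latency_ms: float
    min_audit_completeness: float  # share of decisions with a complete audit record
    max_person_days: float         # integration effort budget


def precision_recall(labels: Sequence[bool], preds: Sequence[bool]) -> Tuple[float, float]:
    tp = sum(1 for y, p in zip(labels, preds) if y and p)
    fp = sum(1 for y, p in zip(labels, preds) if p and not y)
    fn = sum(1 for y, p in zip(labels, preds) if y and not p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def p95(values: Sequence[float]) -> float:
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    return ordered[math.ceil(0.95 * len(ordered)) - 1]


def failed_thresholds(t: PocThresholds, labels: Sequence[bool], preds: Sequence[bool],
                      latencies_ms: Sequence[float], audit_complete: Sequence[bool],
                      person_days: float) -> List[str]:
    """Return the names of failed thresholds; an empty list means the PoC passed."""
    precision, recall = precision_recall(labels, preds)
    failures = []
    if precision < t.min_precision:
        failures.append("precision")
    if recall < t.min_recall:
        failures.append("recall")
    if p95(latencies_ms) > t.max_p95_latency_ms:
        failures.append("latency")
    if sum(audit_complete) / len(audit_complete) < t.min_audit_completeness:
        failures.append("audit_log_completeness")
    if person_days > t.max_person_days:
        failures.append("integration_effort")
    return failures
```

Freezing the thresholds object (`frozen=True`) is a small guard against moving goalposts once results come in.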

Days 31–60: Run and instrument

- Run the vendor model against the evaluation set, then against a live shadow stream in your environment — never on production decisions yet.

- Have compliance pull 50 random decisions and ask the vendor to explain each one. Time how long it takes and rate the answers.

- Track every engineering hour, every prompt change, every integration fix. This is your real TCO signal.

- Pull a 30-day projected cost based on observed volume and overage behavior, not the sales quote.
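The projection should come from the volume actually observed in the shadow stream, run through the contract’s real overage terms, and then be stressed at 2x and 5x forecast volume. A minimal sketch, assuming a simple “base fee plus included units plus per-unit overage” price structure; `PricingTerms` and any numbers you pass in are hypothetical, so substitute the vendor’s written terms.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class PricingTerms:
    """Hypothetical contract terms; replace with the vendor's written numbers."""
    monthly_base_fee: float
    included_units: int        # decisions or documents covered by the base fee each month
    overage_unit_price: float  # price per unit above included_units


def monthly_cost(terms: PricingTerms, units: int) -> float:
    overage_units = max(0, units - terms.included_units)
    return terms.monthly_base_fee + overage_units * terms.overage_unit_price


def project_30_days(terms: PricingTerms, observed_units: int, observed_days: int) -> float:
    """Scale the observed daily run-rate to a 30-day month, then apply the contract terms."""
    projected_units = round(observed_units / observed_days * 30)
    return monthly_cost(terms, projected_units)


def stress_test(terms: PricingTerms, forecast_units: int,
                multipliers: Iterable[int] = (1, 2, 5)) -> Dict[int, float]:
    """Monthly cost at 1x, 2x, and 5x the forecast volume."""
    return {m: monthly_cost(terms, forecast_units * m) for m in multipliers}
```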

Days 61–90: Decide

- Compare actual results against the four written thresholds. No moving goalposts.

- Score the vendor against the 12-question due-diligence list above with fresh eyes after working with them.

- Run an exit drill: export everything and confirm you can stand up an alternative in a week.

- Bring the scorecard, the exit-drill result, and the projected year-two TCO to a single go/no-go meeting with engineering, compliance, and finance in the room.
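The exit drill is easier to run honestly against a written checklist. A minimal sketch, assuming the vendor hands over a manifest that maps each artifact type to its export format. The required artifacts follow the text (training data, fine-tuned weights, prompts, evaluation sets, audit logs); the set of “open” formats is an assumption to adjust to your own stack, and `exit_drill_gaps` is a hypothetical helper. It only checks coverage: standing up the alternative within a week still has to be tested for real.

```python
from typing import Dict, List

# Everything you would need to leave, including the decision audit logs.
REQUIRED_ARTIFACTS = ("training_data", "fine_tuned_weights", "prompts",
                      "evaluation_sets", "audit_logs")
# Assumption: adjust to the open formats your own stack can actually load.
OPEN_FORMATS = {"csv", "parquet", "json", "jsonl", "yaml", "safetensors", "onnx"}


def exit_drill_gaps(manifest: Dict[str, str]) -> List[str]:
    """manifest maps artifact type -> format the vendor actually exported."""
    gaps = []
    for artifact in REQUIRED_ARTIFACTS:
        fmt = manifest.get(artifact)
        if fmt is None:
            gaps.append(f"{artifact}: not exported")
        elif fmt.lower() not in OPEN_FORMATS:
            gaps.append(f"{artifact}: proprietary format '{fmt}'")
    return gaps
```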

Trade-offs to name out loud:

- A 90-day PoC delays go-live by a quarter. The alternative is a 12-month rebuild after compliance kills the project.

- Running shadow inference doubles infrastructure cost during the PoC. Budget for it.

- Vendors will push for a 30-day PoC. For regulated use cases, decline — 30 days is not long enough to surface drift, cost overruns, or audit weaknesses.

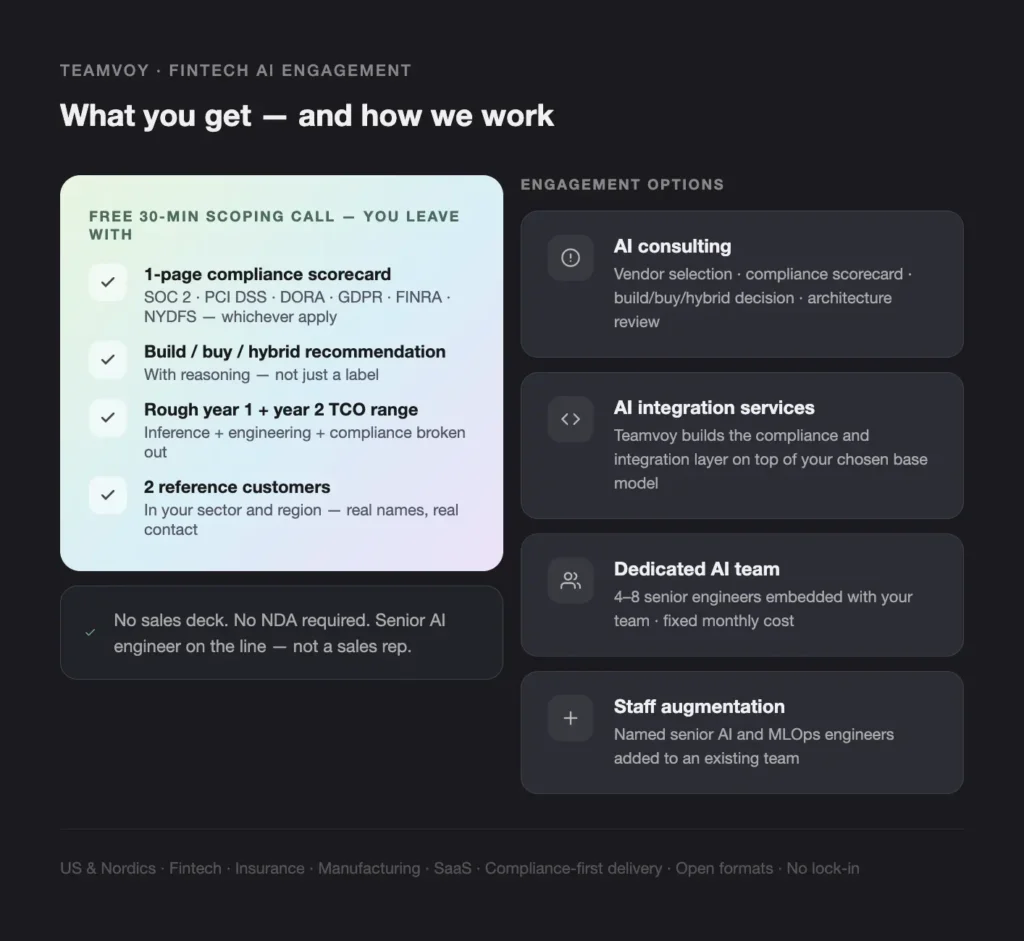

When should you hire Teamvoy (or a partner like us) to deliver this?

Hire a custom or hybrid AI engineering partner when your use case is regulated, your data can’t leave your environment, and you can’t accept a black-box vendor. Stay with an off-the-shelf API vendor when the workflow is commodity, non-regulated, and low volume. Teamvoy’s AI consulting practice covers the strategy, AI procurement, and model risk management work; AI integration services handle the build and compliance layer on top — including the audit-log, explainability, and TPRM artifacts regulators expect.

Who are the best AI vendors for fintech, by vendor type?

There is no single “best” AI vendor for fintech — there are three vendor types, each best for a different fit. Score each type against your use case before naming names.

| Vendor type | Compliance depth | Lock-in risk | TCO transparency | Enterprise scale | Best fit |
|---|---|---|---|---|---|
| Custom / hybrid build (e.g., Teamvoy) | Full — audit trails, explainability, regulator-ready docs | None — you own IP and use open formats | Transparent — fixed-cost engineering | Yes — stock exchange, 7-bank consortium, 400k+ users | Regulated fintechs needing compliance and scale |
| Off-the-shelf API vendor | Limited — typically black-box | High — egress fees, proprietary formats | Hidden — token economics dominate | Variable — often rate-limited | Non-regulated or low-volume workflows |
| Global consultancy (SoftServe, N-iX, EPAM, etc.) | Moderate — costly to deepen | Medium — proprietary tooling, long contracts | Opaque — time-and-materials with change orders | Yes — but slow | Enterprises with long timelines and budget headroom |

Why Teamvoy

- Stock exchange: 5+ years of trade surveillance migration across 30 institutions, millions of daily transactions, 99.9%+ uptime — read the trade surveillance re-engineering case study.

- African banking consortium: 7 banks, 400,000+ users, API-first modernization.

- Fintech platform: 30–40% faster delivery using AI-native engineering practices.

- Banking app: 10x more requests per second, 5x faster response after a structured refactor.

How do you work with Teamvoy on a fintech AI project?

You start with a free 30-minute scoping call with a senior AI engineer, booked at teamvoy.com/contact-us. Teamvoy is a custom and hybrid AI engineering partner for regulated fintechs in the US and Nordics: compliance-first delivery, open formats, no vendor lock-in.

What you get on the first call:

- A 1-page compliance scorecard for your use case across SOC 2, PCI DSS, PSD2, DORA, MiCA, GDPR, FINRA, and NYDFS Part 500 — whichever apply.

- A build / buy / hybrid recommendation with reasoning.

- A rough TCO range across model inference, engineering, and compliance for years 1 and 2.

- 2 reference customers in your sector and region.

No sales deck. No NDA required for the first call. Book your free 30-minute scoping call at teamvoy.com/contact-us.

Conclusion

Choosing an AI vendor for fintech in 2026 is a compliance and architecture decision before it’s a pricing decision. Score on explainability and audit readiness first, lock-in risk second, and total cost third. Decide whether the use case is build, buy, or hybrid before you talk to vendors, then run a real proof of concept on your own data with your own compliance requirements.

Next step: get a compliance scorecard for your AI use case

Teamvoy gives fintech CTOs, heads of product, and compliance officers a free 30-minute scoping call. You leave with a 1-page compliance scorecard, a build / buy / hybrid recommendation, a rough year-1 and year-2 TCO range, and two reference customers in your sector. Book your free 30-minute scoping call at teamvoy.com/contact-us. No sales deck. No NDA required for the first call. A senior AI engineer is on the line, not a sales rep.