Key takeaways:

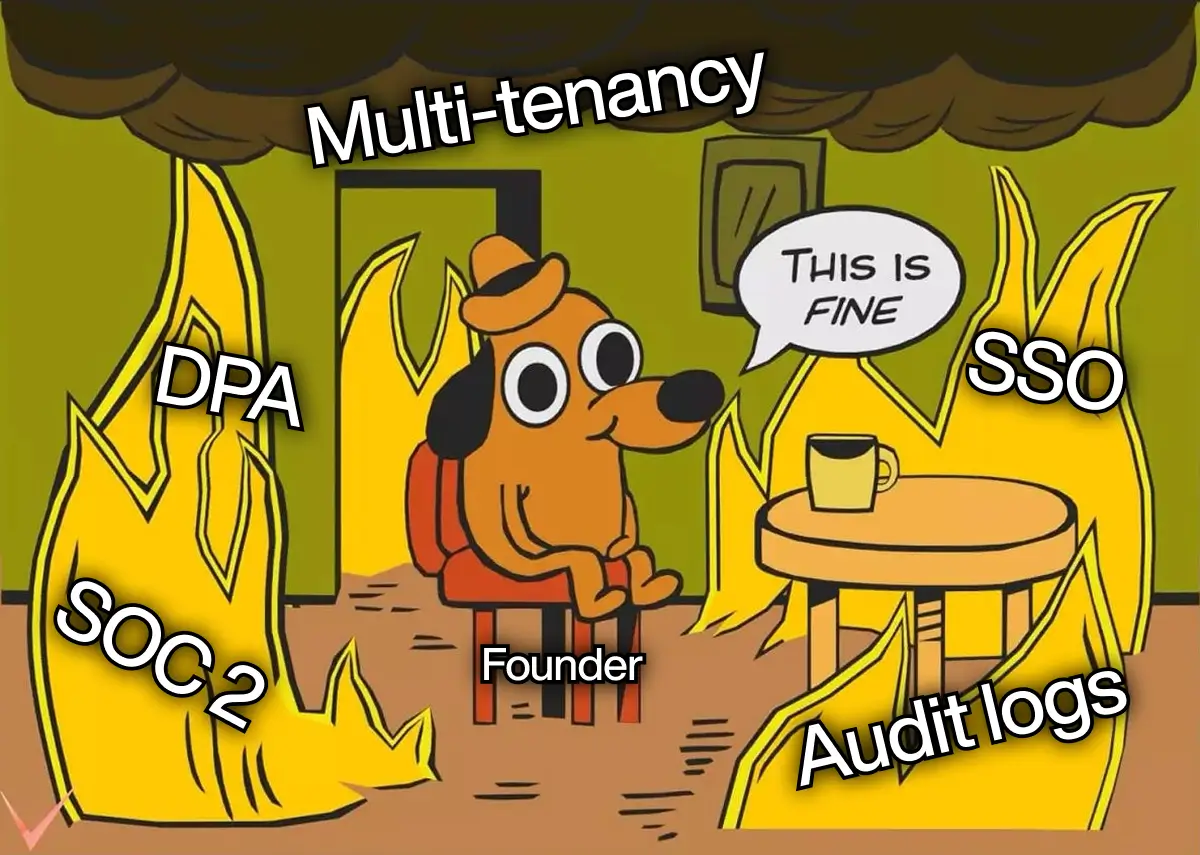

A working model and two closed pilots are not an enterprise-ready product. The gap is a scaffold gap — evals an auditor can read, multi-tenancy that survives a security review, SSO and audit trails the buyer’s CISO expects, and a SOC 2 plan that turns 12 months of dread into 90 days of execution.

This piece names the scaffold and the order to build it.

- Enterprise procurement does not block on model quality. It blocks on evidence the model is operated like infrastructure.

- Multi-tenancy designed late is the most expensive rework most AI-native startups will ever do.

- An eval suite a regulator can read is worth more than a benchmark a researcher can publish.

- SOC 2 is a 12-month problem only if you start it on day 300. Start it on day 30 and the audit is paperwork.

- Most AI-native teams hire a second ML engineer when their next hire should be a platform engineer.

Introduction

A Series A AI-native founder emailed Teamvoy in February: “We closed two pilots and both want SOC 2 and multi-tenancy by Q3.” Two weeks later he sent a photo of a 47-page security questionnaire, three sections highlighted yellow.

The model was fine. The scaffold underneath did not exist yet. This piece is for that founder and that CTO. It names the scaffold between the demo that won the pilot and the production system enterprise security teams will sign on for, and it sequences what to build first.

What does “enterprise-ready” actually mean for an AI-native startup?

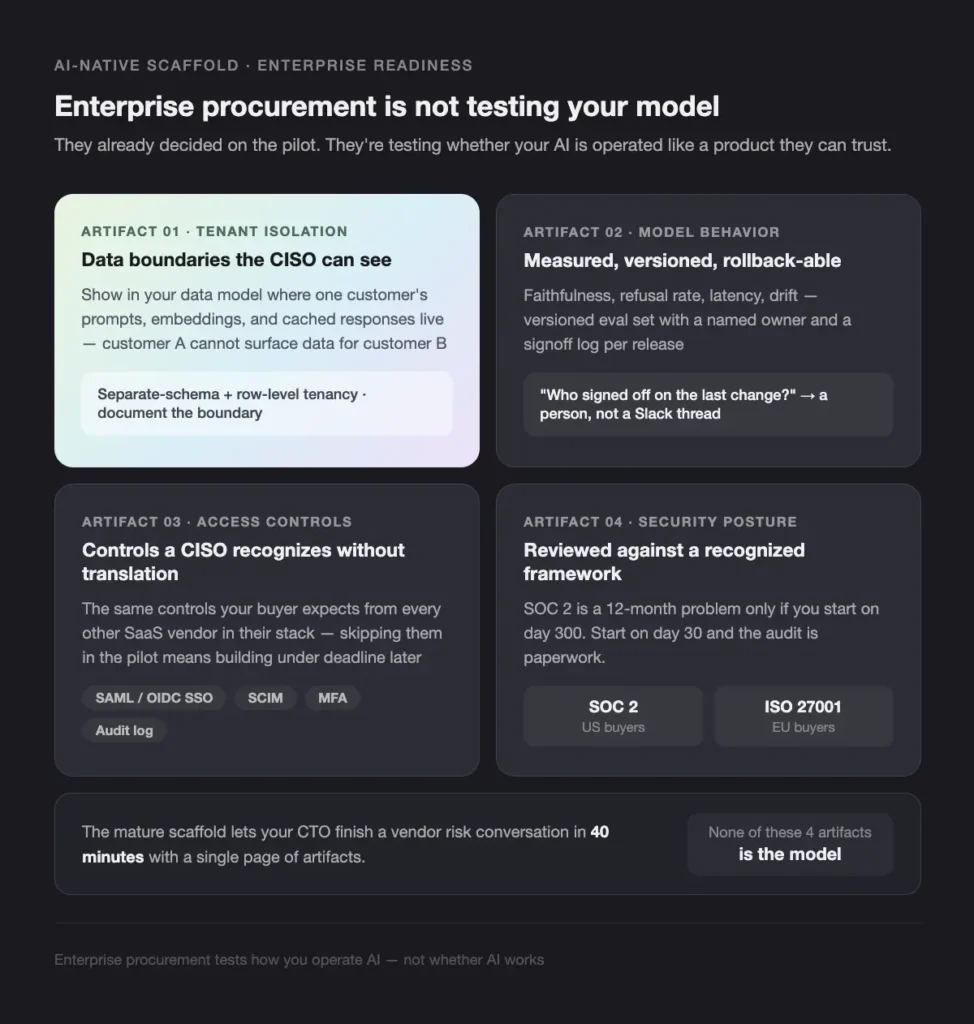

Most founders read “enterprise-ready” as a list of features. It is a list of evidence. Enterprise procurement is not testing whether your AI is good. Your pilot closed; they have already decided. They are testing whether your AI is operated like a product they can trust.

The mature scaffold lets your CTO finish a vendor risk conversation in 40 minutes with a single page of artifacts to walk through. The same failure mode shows up in regulated buyers more broadly, which we covered in “Why most AI pilots in fintech fail to reach production”: the gap is the operating system around the model, not the model.

Which four pieces of evidence does enterprise procurement actually require?

The formal-looking security questionnaire is, under the formatting, one conversation: a CISO asking for four artifacts. Each is engineering work, and none of them is the model.

Tenant isolation that survives inspection. The buyer wants to see, in your data model, where one customer’s prompts, embeddings, and cached LLM responses live, and how a query for customer A cannot accidentally surface data for customer B. For most Series A AI-native products, separate-schema with row-level tenancy is the right starting point. Whatever you pick, document the boundary.
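One way to make the boundary inspectable is to put the tenant identifier into every cache key, so a lookup without a tenant cannot be expressed at all. The sketch below is a minimal in-memory illustration, not a production design; `TenantScopedCache` and `TenantBoundaryError` are hypothetical names, and a real system would sit on a database or cache service with its own isolation controls.

```python
import hashlib
from dataclasses import dataclass, field


class TenantBoundaryError(Exception):
    """Raised when a cache access is attempted without a tenant identifier."""


@dataclass
class TenantScopedCache:
    """In-memory stand-in for a cache of LLM responses, keyed per tenant."""

    _store: dict = field(default_factory=dict)

    @staticmethod
    def _key(tenant_id: str, prompt: str) -> tuple:
        # Refuse any access that does not name a tenant.
        if not tenant_id:
            raise TenantBoundaryError("tenant_id is required for every cache access")
        digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        # The tenant is part of the key, so identical prompts never collide across tenants.
        return (tenant_id, digest)

    def put(self, tenant_id: str, prompt: str, response: str) -> None:
        self._store[self._key(tenant_id, prompt)] = response

    def get(self, tenant_id: str, prompt: str):
        return self._store.get(self._key(tenant_id, prompt))


cache = TenantScopedCache()
cache.put("tenant_a", "Summarize our Q2 contract terms", "Tenant A summary")

# The same prompt from another tenant misses the cache instead of leaking data.
print(cache.get("tenant_a", "Summarize our Q2 contract terms"))
print(cache.get("tenant_b", "Summarize our Q2 contract terms"))
```

The design choice worth documenting is that the tenant check lives in one function that every read and write passes through, which gives a reviewer a single place to inspect.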

Model behavior measured, versioned, and rollback-able. Faithfulness, refusal rate, latency budget, drift — measured against a versioned eval set, with a named owner and a signoff log per release. If the buyer’s risk team asks who signed off on the last model change, the answer should be a person, not a Slack thread.
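A signoff log can be as simple as one record per release that ties a model version to an eval-set version, the measured metrics, and a named person. The sketch below assumes hypothetical threshold values and field names; the actual metrics and limits are for each team to set.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

# Hypothetical thresholds for illustration only.
THRESHOLDS = {
    "faithfulness_min": 0.90,
    "refusal_rate_max": 0.05,
    "p95_latency_ms_max": 2000.0,
}


@dataclass(frozen=True)
class ReleaseRecord:
    model_version: str
    eval_set_version: str
    faithfulness: float
    refusal_rate: float
    p95_latency_ms: float
    signed_off_by: Optional[str] = None
    signed_off_on: Optional[date] = None


def gate_failures(record: ReleaseRecord) -> List[str]:
    """Return the reasons a release may not ship; an empty list means it passes."""
    failures = []
    if record.faithfulness < THRESHOLDS["faithfulness_min"]:
        failures.append("faithfulness below minimum")
    if record.refusal_rate > THRESHOLDS["refusal_rate_max"]:
        failures.append("refusal rate above maximum")
    if record.p95_latency_ms > THRESHOLDS["p95_latency_ms_max"]:
        failures.append("p95 latency over budget")
    if not record.signed_off_by or record.signed_off_on is None:
        failures.append("no named signoff")
    return failures


candidate = ReleaseRecord(
    model_version="2025-03-rc1",
    eval_set_version="v7",
    faithfulness=0.93,
    refusal_rate=0.02,
    p95_latency_ms=1400.0,
)
print(gate_failures(candidate))
```

With the record above, the only failure reported is the missing signoff, which is the case the risk team's question is probing for.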

Access controls a CISO recognizes without translation. SAML or OIDC single sign-on, SCIM for user provisioning, multi-factor authentication, and an audit log the buyer can inspect. These are the same controls the buyer expects from every other SaaS vendor in their stack. The cost of skipping them in the pilot is the cost of building them under a deadline later.
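For the audit log, what matters to a buyer is that every entry answers who did what, to which resource, for which tenant, and when. A minimal sketch of one structured entry follows; the field names are assumptions for illustration, not a standard schema.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_event(
    actor: str,
    action: str,
    resource: str,
    tenant_id: str,
    when: Optional[datetime] = None,
) -> str:
    """Serialize one audit entry as a JSON line suitable for an append-only log."""
    timestamp = when or datetime.now(timezone.utc)
    record = {
        "actor": actor,          # who: the authenticated user or service
        "action": action,        # what: a stable verb such as "model.release"
        "resource": resource,    # which object the action touched
        "tenant_id": tenant_id,  # whose data boundary the action fell inside
        "timestamp": timestamp.isoformat(),
    }
    # Sorted keys keep entries stable for diffing and downstream parsing.
    return json.dumps(record, sort_keys=True)


print(audit_event("jane@example.com", "user.role.update", "user/42", "tenant_a"))
```

Writing entries as one JSON object per line keeps the log easy to export in whatever format the buyer's security tooling expects.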

Security posture reviewed against a recognized framework. SOC 2 Type I readiness is enough to sign an enterprise pilot at most US buyers; Type II is the renewal conversation a quarter or two later. ISO 27001 is more common with European buyers. Pick one; do not skip.

How do you build the scaffold without stopping product velocity?

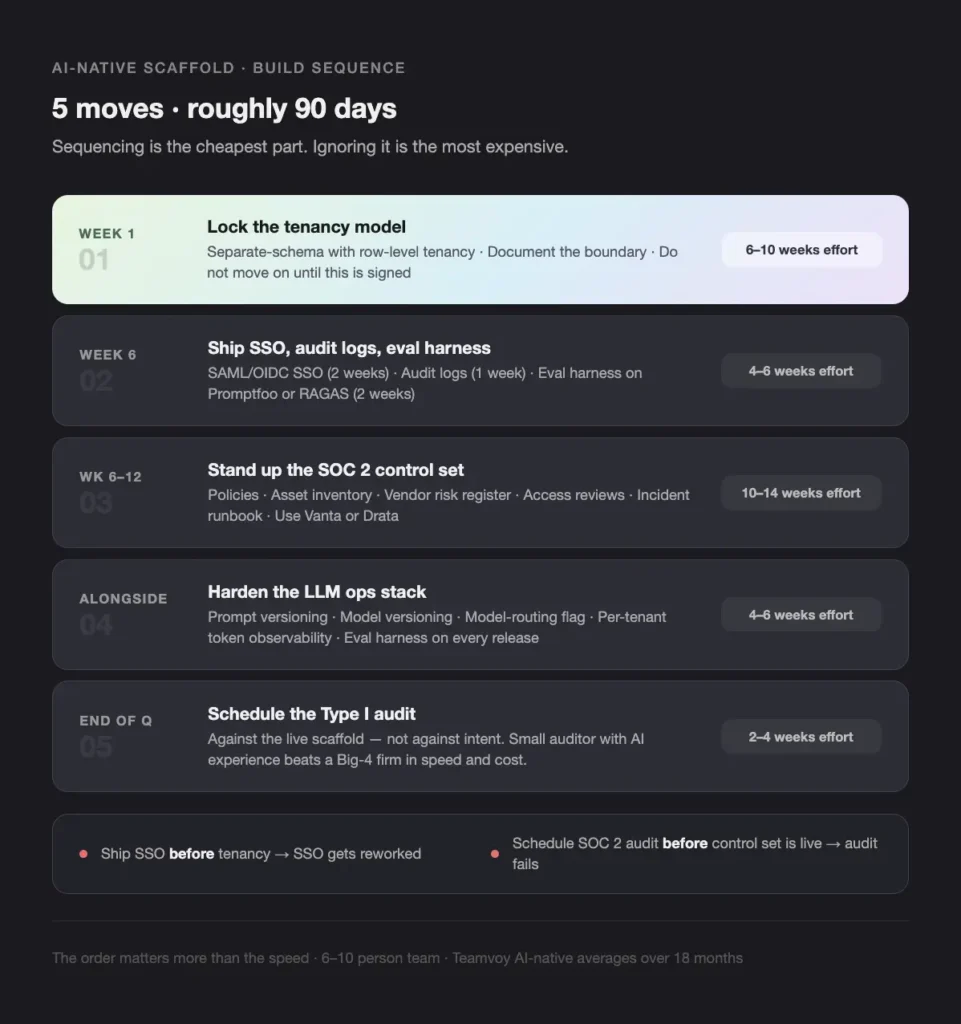

Sequencing is the cheapest part; ignoring it the most expensive. Most teams try multi-tenancy and SOC 2 in parallel, lose focus, ship neither.

Five moves, roughly 90 days.

- Lock the tenancy model in week one. Separate-schema with row-level tenancy is the right starting point for most AI-native B2B products. Document the boundary.

- Ship SSO, audit logs, and a first-pass eval harness by week six. SAML/OIDC SSO is two weeks; audit logs are one; an eval harness on Promptfoo or RAGAS is two. See our LLMOps tooling reference.

- Stand up the SOC 2 control set in weeks six–twelve. Policies, asset inventory, vendor risk register, access reviews, incident runbook. Use Vanta or Drata.

- Harden the LLM ops stack alongside it. Prompt versioning, model versioning, model-routing flag, per-tenant token observability. The eval harness now runs on every release. The hidden run-cost traps appear here.

- Schedule the Type I audit against the live scaffold. A small auditor with AI experience costs a fraction of what a Big-4 firm charges, and moves faster.
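The per-tenant token observability in the hardening step can start as a small aggregator that every LLM call reports into. The sketch below keeps counters in memory and uses hypothetical names (`UsageTracker`, `TenantUsage`); a real deployment would export the same figures to whatever metrics system the team already runs.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class TenantUsage:
    requests: int = 0
    prompt_tokens: int = 0
    completion_tokens: int = 0
    refusals: int = 0
    total_latency_ms: float = 0.0

    @property
    def refusal_rate(self) -> float:
        return self.refusals / self.requests if self.requests else 0.0

    @property
    def mean_latency_ms(self) -> float:
        return self.total_latency_ms / self.requests if self.requests else 0.0


class UsageTracker:
    """Accumulates token, latency, and refusal counts per tenant."""

    def __init__(self) -> None:
        self._usage = defaultdict(TenantUsage)

    def record(self, tenant_id: str, prompt_tokens: int, completion_tokens: int,
               latency_ms: float, refused: bool = False) -> None:
        usage = self._usage[tenant_id]
        usage.requests += 1
        usage.prompt_tokens += prompt_tokens
        usage.completion_tokens += completion_tokens
        usage.total_latency_ms += latency_ms
        usage.refusals += int(refused)

    def snapshot(self, tenant_id: str) -> TenantUsage:
        return self._usage[tenant_id]


tracker = UsageTracker()
tracker.record("tenant_a", prompt_tokens=800, completion_tokens=200, latency_ms=900.0)
tracker.record("tenant_a", prompt_tokens=400, completion_tokens=0, latency_ms=300.0, refused=True)
print(tracker.snapshot("tenant_a"))
```

Tracking tokens per tenant from the start also gives the team the raw numbers it needs when run costs are questioned.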

Current state vs target:

| Capability | Series A demo state | Enterprise-ready state | Effort (weeks) |
|---|---|---|---|
| Tenancy | Single shared DB, no isolation | Schema- or DB-level isolation, documented boundary | 6–10 |
| Identity / access | Email + password, manual user adds | SSO (SAML/OIDC), SCIM, MFA, audit log | 4–6 |
| Model evaluation | Manual spot-checks | Versioned eval set, automated on each release | 6–8 |
| Observability | Application logs only | Per-tenant token, latency, refusal, drift metrics | 4–6 |
| Security framework | No formal posture | SOC 2 Type I readiness with policies + control owners | 10–14 |
| Vendor risk | Ad-hoc subprocessor list | Maintained register with DPA / sub-processor agreements | 2–4 |

These are calendar weeks on a 6–10 person team, based on Teamvoy's averages across AI-native engagements over 18 months.

The order matters more than the speed. Ship SSO before tenancy and SSO gets reworked. Schedule a SOC 2 audit before the control set is live and the audit fails.
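The ordering constraints can be written down as a small dependency graph and checked mechanically. The sketch below uses Python's standard `graphlib`; the step names and the specific dependencies are one reading of the plan above, not a fixed rule.

```python
from graphlib import TopologicalSorter

# Each step maps to the steps that must be finished first (assumed dependencies).
DEPENDS_ON = {
    "tenancy_model": set(),
    "sso_and_audit_logs": {"tenancy_model"},
    "eval_harness": set(),
    "soc2_control_set": {"sso_and_audit_logs"},
    "llm_ops_hardening": {"eval_harness"},
    "type1_audit": {"soc2_control_set", "llm_ops_hardening"},
}


def build_order(depends_on: dict) -> list:
    """Return one valid build order that respects every dependency."""
    return list(TopologicalSorter(depends_on).static_order())


def violations(order: list, depends_on: dict) -> list:
    """List (step, prerequisite) pairs where the prerequisite is scheduled too late."""
    position = {step: index for index, step in enumerate(order)}
    return [
        (step, dep)
        for step, deps in depends_on.items()
        for dep in deps
        if position[dep] > position[step]
    ]


print(build_order(DEPENDS_ON))

# A plan that ships SSO before the tenancy model is flagged.
risky_plan = ["sso_and_audit_logs", "tenancy_model", "eval_harness",
              "soc2_control_set", "llm_ops_hardening", "type1_audit"]
print(violations(risky_plan, DEPENDS_ON))
```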

When does it make sense to bring in an outside team?

The Series A–B pattern: the founding team is excellent on model and agent work and has never shipped a multi-tenant B2B SaaS. Adding another ML engineer moves the team further from the gap, not closer. The right hire is a platform engineer, a security engineer, or an embedded team with the scaffold patterns in hand.

A senior nearshore engineer on the scaffold for two quarters runs roughly USD 60K–110K all-in (EUR 56K–103K) — less than a loaded US senior platform hire, and faster to onboard. The trade is knowledge-handover discipline; Teamvoy treats the runbook and the architecture doc as deliverables. Some work stays in-house: the fine-tuning loop, eval-set definition, agentic workflow logic. That is product surface.

The scaffold underneath — tenancy, identity, observability, security policy — is the layer an outside team can compress, because almost every line is a known engineering problem with known patterns. The signal the moment has arrived: the founder is writing security-questionnaire responses at 11pm and product velocity has dropped.

What does success look like at the end of 90 days?

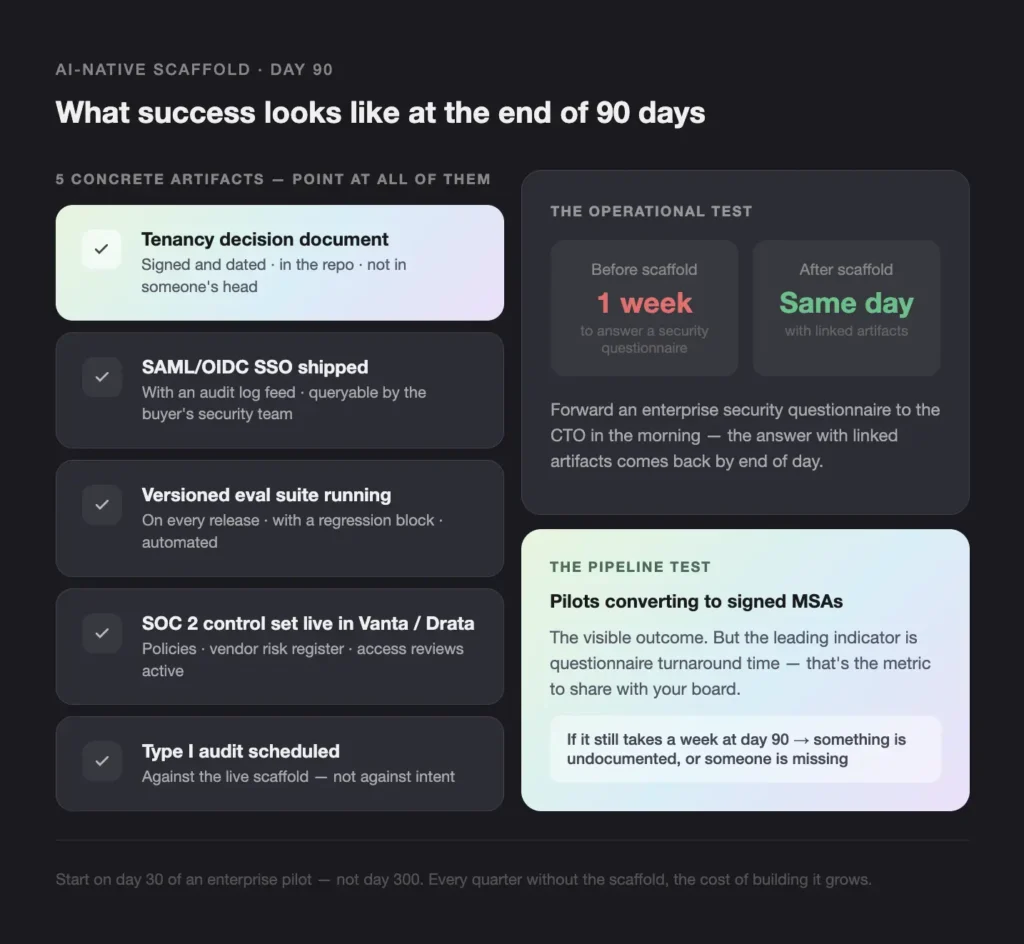

A founder running this plan should be able to point at five concrete artifacts at the end of the quarter:

- A Type I audit scheduled against the live scaffold rather than against intent.

- A tenancy decision document signed and dated, in the repo.

- A SAML/OIDC SSO integration shipped, with an audit log feed.

- A versioned eval suite running on every release, with a regression block.

- A SOC 2 control set instantiated in Vanta or Drata, with policies, vendor risk register, and access reviews live.

The operational test is sharper. Forward an enterprise security questionnaire to the CTO in the morning; the answer with linked artifacts should come back by end of day. If the questionnaire still takes a week to answer at day 90, the scaffold is not done — something is undocumented, or someone is missing.

The pipeline test is downstream. Pilots converting to signed MSAs is the visible outcome, but the leading indicator is questionnaire turnaround time. That metric is also the cleanest signal to share with your board.

How does Teamvoy help AI-native startups ship the scaffold?

Teamvoy embeds with AI-native engineering teams to build exactly the scaffold this piece describes — tenancy, identity, evals, observability, and the SOC 2 control set — without taking ownership of the product layer that should stay with the founders. The engagement model is senior-led, long-lived, and explicitly designed around a knowledge-handover deliverable. When the engagement closes, the in-house team owns a documented runbook, a working eval suite, and an architecture doc that survives the next quarter without us.

The delivery team works across fintech and AI-native engagements in the United States and the Nordics, with regulator-surface fluency across SOC 2, SR 11-7, the EU AI Act, NYDFS Part 500, and DORA. Teamvoy’s three pillars run through every engagement: AI transformation (not AI tourism), engineering depth (not just prompt engineering), and regulated-industry fluency. If you are sitting on closed enterprise pilots and want to scope the 90-day scaffold against your specific stack, book a delivery review.

Conclusion

The AI-native companies that turn closed pilots into renewed contracts are not the ones with the best models. They are the ones whose CTOs show a vendor risk team a dated, evidence-based scaffold in 40 minutes.

Start on day 30 of an enterprise pilot, not day 300. The compounding favours the early start: every quarter you do not build the scaffold, the cost of building it grows.